SEO Visibility vs. AI Visibility: How to Measure and Track Both

Search visibility used to mean one thing: where you rank on Google. Not anymore.

Today, visibility plays out across two surfaces: traditional search and AI-powered surfaces. Even within Google, the rules are shifting fast. AI Overviews now answer questions directly on the results page, meaning your ranking no longer guarantees the clicks it used to.

And beyond Google entirely, a growing share of discovery happens inside LLMs, driven by brand mentions, citations, and conversational answers.

The result? Your brand could rank well and still be missing from half the conversation. Understanding, measuring, and tracking both SEO and AI visibility is no longer optional.

SEO visibility determines whether you can be found. AI visibility determines whether you are selected.

Part 1: Understanding Visibility

What Is SEO Visibility?

SEO visibility refers to how visible your website is in search results over time, based on the rankings of your tracked keywords. The higher and more consistent your rankings, the greater your visibility.

It’s a proxy metric: it measures neither traffic nor revenue, but it serves as a leading indicator of SEO performance.

It helps isolate the impact of SEO efforts from external factors like seasonality or brand campaigns.

But two important nuances often get overlooked:

Visibility ≠ traffic: high visibility means your pages have the potential to attract clicks, but it doesn’t mean users actually visit your site. Click-through behavior depends on many factors beyond rankings.

Rankings ≠ actual on-screen visibility: SEO visibility is based on rankings, but a growing share of queries now return AI-generated answers and other SERP features directly on the results page, so rankings no longer guarantee the exposure they once did. Your position might stay the same while your result is pushed further down the page.

What Is AI Visibility?

AI visibility refers to how often and how positively your brand appears in AI-generated responses. The more consistently and prominently your brand appears, the greater your AI visibility.

It's a leading indicator of brand authority and competitive positioning in AI-generated answers.

It reveals which topics AI platforms associate with your brand and how favorably.

AI systems retrieve relevant information, evaluate its usefulness and trustworthiness, and then synthesize it into a final answer, meaning visibility depends on whether your content is selected and included, not just ranked.

How Does AI Visibility Relate to SEO Visibility?

The two are connected but not interchangeable. Ranking well in traditional search improves your chances of appearing in AI answers, but it doesn't guarantee it.

While both traditional search engines and LLMs use entity recognition and contextual relevance, AI systems go further by using these signals to retrieve, evaluate, and synthesize information, not just rank pages.

That said, SEO best practices remain the foundation for both.

What Makes AI Visibility Different?

AI visibility behaves differently from traditional search visibility in two key ways:

AI visibility ≠ stable visibility: presence in AI responses fluctuates significantly over time. A study tracking 481 websites across ChatGPT, Perplexity, and Google AI Overviews found that only 49% of brands remained consistently visible across all platforms over three weeks; the rest either dropped out entirely or appeared sporadically.

AI visibility ≠ universal visibility: visibility is fragmented across platforms. The same study shows that appearing in one system doesn’t guarantee presence in others like ChatGPT, Perplexity, or Gemini.

Part 2: Measuring Visibility

How Is SEO Visibility Measured?

SEO visibility is calculated from your rankings across a set of tracked keywords. While different tools use slightly different formulas, most approaches rely on similar inputs: ranking position, search demand, and weighting systems.

Common visibility models

Points-based: assigns weighted points to each ranking position. In Advanced Web Ranking, rankings in the top 30 receive points, with higher positions earning more (e.g., position 1 = 30 points, position 2 = 29 points, etc.). This model focuses purely on ranking performance, making it stable and easy to compare over time.

Percentage-based: expresses visibility as a share of total possible visibility. In Advanced Web Ranking, this is calculated by normalizing the same weighted points system against a hypothetical #1 ranking for every keyword.

Index-based: presents visibility as a scaled score or index. While often treated as a separate category, it typically relies on similar inputs as percentage-based models, with the main difference being how the score is calculated and displayed.

Pixel-based: measures actual on-screen visibility by analyzing SERP layout and how much space your listing occupies. This approach is becoming increasingly important as AI Overviews and other SERP features push organic results further down the page. In Advanced Web Ranking, pixel-based visibility is calculated both as a score and as a percentage.
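To make the points-based and percentage-based models concrete, here is a minimal Python sketch. It assumes the example scale above continues linearly (position 1 = 30 points down to position 30 = 1 point, nothing beyond that), which the article only implies with "etc." The function names and the sample keywords are hypothetical, and this is not presented as AWR's exact implementation.

```python
from typing import Mapping, Optional

MAX_POINTS = 30  # position 1 earns 30 points, per the example above


def position_points(position: Optional[int]) -> int:
    """Points for one keyword; None means the site does not rank."""
    if position is None or position < 1 or position > MAX_POINTS:
        return 0
    # Assumed linear scale: 1 -> 30, 2 -> 29, ..., 30 -> 1
    return MAX_POINTS + 1 - position


def visibility_score(rankings: Mapping[str, Optional[int]]) -> int:
    """Points-based model: sum of points over all tracked keywords."""
    return sum(position_points(p) for p in rankings.values())


def visibility_percent(rankings: Mapping[str, Optional[int]]) -> float:
    """Percentage-based model: score divided by the score of a
    hypothetical #1 ranking for every tracked keyword."""
    if not rankings:
        return 0.0
    best_case = MAX_POINTS * len(rankings)
    return 100.0 * visibility_score(rankings) / best_case


# Hypothetical keyword set; None marks a keyword with no ranking.
rankings = {
    "crm software": 1,
    "best crm": 2,
    "crm pricing": 15,
    "crm for startups": None,
}

print(visibility_score(rankings))    # 30 + 29 + 16 + 0 = 75
print(visibility_percent(rankings))  # 75 / 120 = 62.5
```

Note how the unranked keyword still counts toward the best-case total, which is why the size and composition of the keyword set influence both numbers.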

Which model is better?

There’s no single “best” model as each captures a different aspect of visibility, from rankings to actual on-screen presence. The most accurate view comes from combining them.

| Model | What it tells you | Limitations |
|---|---|---|
| Points-based | “How much visibility did I earn?” (absolute value) | Based on ranking position only; ignores search demand and is influenced by the size and composition of your keyword set |
| Percentage-based (normalized) | “What share of total possible visibility do I have?” (relative performance) | Based on ranking position only; ignores search demand and reflects performance within your keyword set, not real-world impact |
| Index-based | “How can I compare visibility using a simplified score?” (normalized comparison) | Scores differ across tools; hard to interpret without context |
| Pixel-based | “Is my result actually seen on the page?” (real exposure) | Newer approach, less standardized across tools |

How Is AI Visibility Measured?

While dedicated tools for tracking AI visibility are still emerging, the underlying signals that reveal how visible a brand truly is in AI-generated answers are already becoming clear:

Mentions: whether your brand appears at all in the AI-generated response. They indicate basic presence and inclusion and show how often you are surfaced across prompts and topics. If you’re not mentioned, you’re not visible.

Citations: whether your content is referenced or used as a source to support the answer. They show if your website is used to support or validate information and indicate trust and factual authority. Also, they highlight how often you are cited compared to competitors.

Framing: how your brand is described, recommended, and perceived. It reflects whether you are positioned as an authority, an option, or a secondary choice, and whether the sentiment is positive, neutral, or negative. It also reveals how AI systems interpret your credibility and expertise.

In practice, these signals are analyzed across multiple prompts and topics to understand a brand’s overall visibility coverage in AI-generated results.
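As a rough illustration of analyzing these signals across prompts, the sketch below aggregates mentions, citations, and sentiment from a set of recorded AI answers. The `AnswerObservation` record, its field names, and the sample prompts are hypothetical; how each answer is collected and labeled is assumed to happen elsewhere.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class AnswerObservation:
    """One AI-generated answer, already labeled for a single brand (hypothetical shape)."""
    prompt: str
    mentioned: bool  # brand is named in the answer text
    cited: bool      # brand's website is listed as a source
    sentiment: str   # "positive", "neutral", or "negative"


def summarize(observations: List[AnswerObservation]) -> Dict[str, object]:
    """Share of answers with a mention, share with a citation,
    and sentiment counts over the answers that mention the brand."""
    total = len(observations)
    if total == 0:
        return {"mention_rate": 0.0, "citation_rate": 0.0, "sentiment": {}}
    mentions = sum(o.mentioned for o in observations)
    citations = sum(o.cited for o in observations)
    framing = Counter(o.sentiment for o in observations if o.mentioned)
    return {
        "mention_rate": mentions / total,
        "citation_rate": citations / total,
        "sentiment": dict(framing),
    }


# Hypothetical sample: four prompts on one topic.
observations = [
    AnswerObservation("best rank tracker", True, True, "positive"),
    AnswerObservation("rank tracker for agencies", True, False, "positive"),
    AnswerObservation("how to track AI Overviews", True, True, "neutral"),
    AnswerObservation("free seo tools", False, False, "neutral"),
]

print(summarize(observations))
```

Keeping mentions and citations as separate rates matters because a brand can be named often without its site being used as a source, and vice versa.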

Learn more about the difference between citations and mentions in AI search in this article.

Advanced Web Ranking acts as both an SEO visibility tracking tool and an AI visibility tracker, helping you monitor how your brand performs across traditional search and AI-generated answers. It functions as a complete SEO visibility checker and AI search visibility checker, giving you a unified view of your performance across both environments.

Part 3: Tracking Visibility in AWR

Analyze Your SEO Visibility in AWR

Tracking visibility effectively means going beyond rankings and understanding how your performance varies across keywords, competitors, and SERP environments. Advanced Web Ranking provides multiple reports that help you analyze visibility from different angles.

1. Track your overall visibility performance

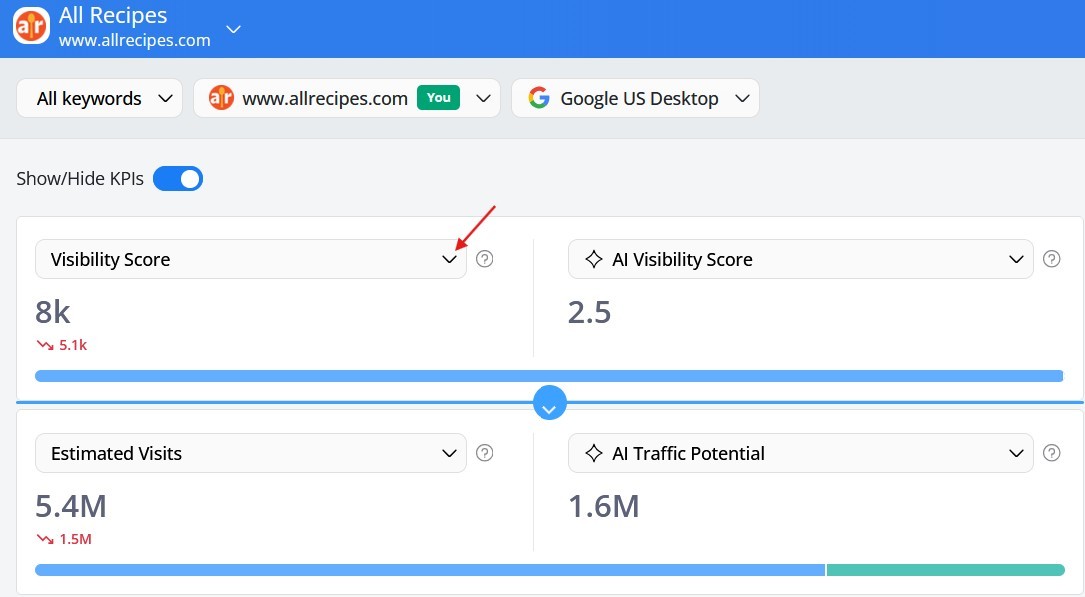

The Keyword Ranking report is where you monitor your visibility across your tracked keywords. Visibility Score, Visibility Percent, and Average Rank are aggregate metrics across your entire keyword set. They tell you how you're performing overall.

Choose the visibility metric you want to analyze from the dropdown.

Each metric helps you understand a different aspect of performance:

Visibility Score → overall visibility trend

shows whether your total visibility is increasing or decreasing

highlights the impact of ranking changes at a high level

Visibility Percent → performance efficiency

shows how much of your total potential visibility you’re capturing

helps evaluate how close you are to your maximum visibility

Average Rank → ranking movement

shows how your rankings shift across keywords

helps explain why visibility increases or drops

Tip: Quickly compare your SEO performance metrics (Visibility Percent, Estimated Visits) with their AI counterparts (AI Visibility Percent, AI Traffic Potential) side by side to get a complete view of your performance across both surfaces.

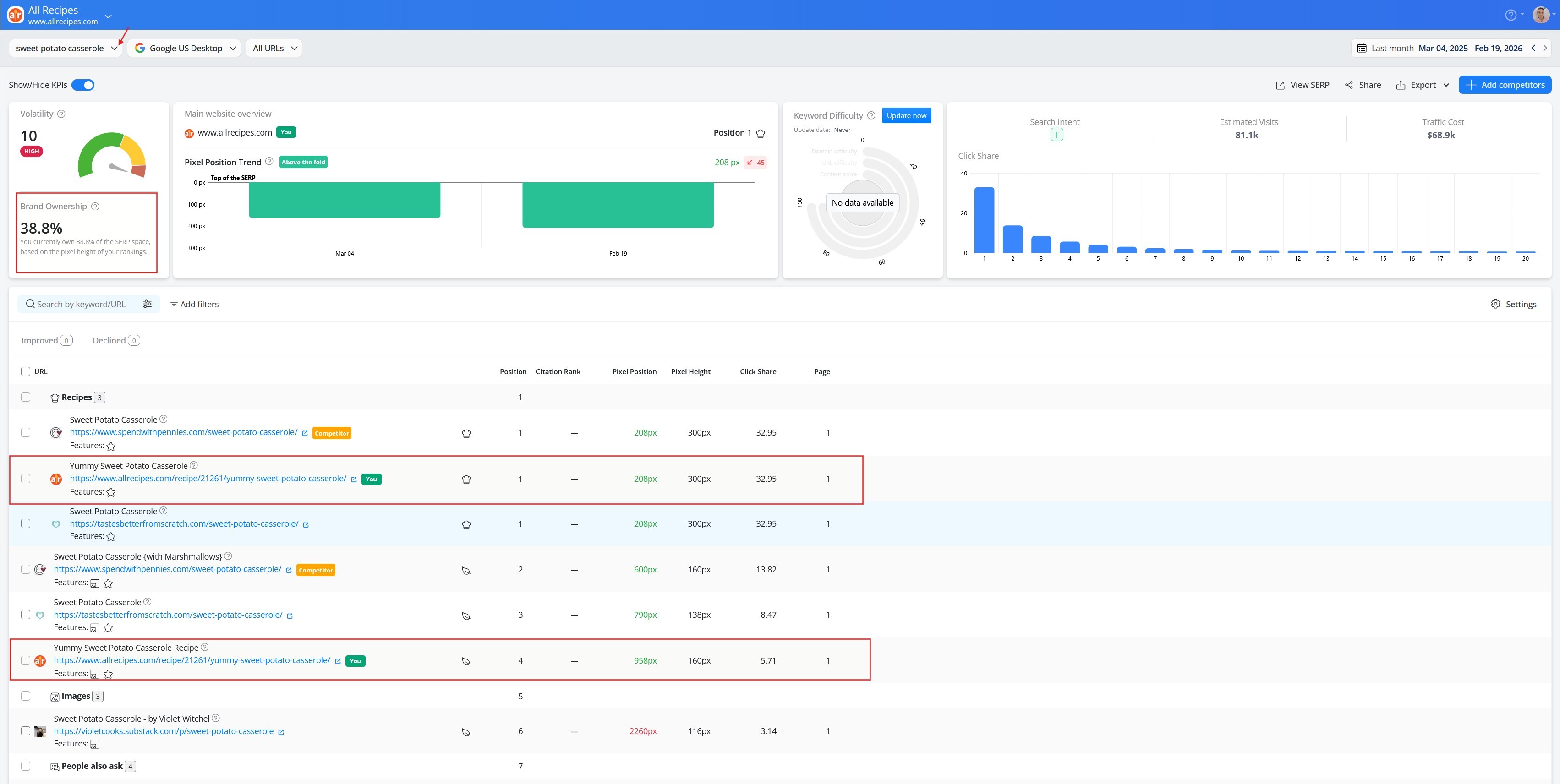

2. Understand your real on-page presence

Further down in the report, Pixel Position and Visibility Distribution show how visible your result actually is on the SERP for each keyword. The real on-page placement can be very different from what your ranking position suggests, depending on what SERP features sit above it.

Pixel Position

shows the exact distance (in pixels) from the top of the SERP to your listing

reveals how far users need to scroll to reach it

Visibility Distribution → page position as a percentage

translates pixel distance into a percentage to show how close your listing is to the top of the SERP

highlights where your visibility is stronger or weaker than your rank suggests

Explore a detailed breakdown of Pixel Position and Visibility Distribution.

3. Measure your brand's dominance per keyword

If you rank multiple times for a keyword, Brand Ownership shows how much of the SERP visibility your brand truly controls for that specific keyword by measuring the total pixel space your listings occupy across all your ranking URLs. The metric is available in the Top Sites report.

Brand Ownership → SERP dominance per keyword

shows how much of the SERP visibility your brand controls for a specific keyword.

helps identify where adding another ranking URL would have the biggest impact on your overall visibility

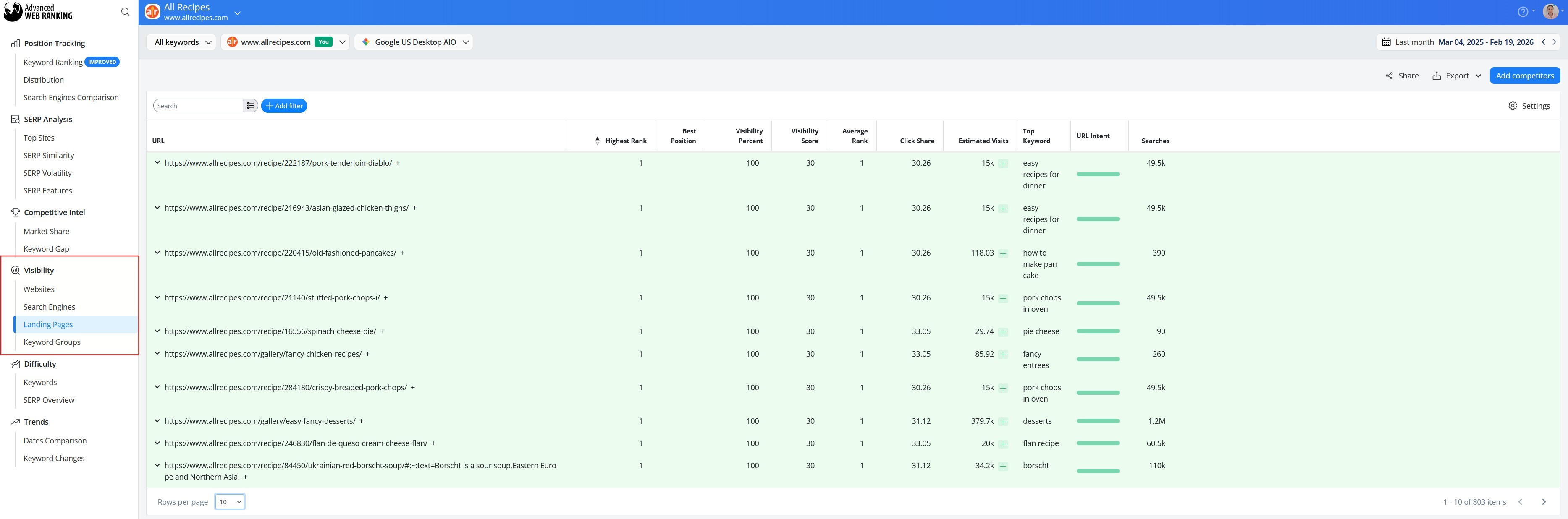

4. Explore visibility across multiple dimensions

Visibility Score, Visibility Percent, Average Rank, and Visibility Distribution can be further analyzed in the Visibility section, where four dedicated reports let you compare them across:

search engines (desktop, mobile, and AI-powered results such as Google AI Overviews or AI search engines)

Analyze Your AI Visibility in AWR

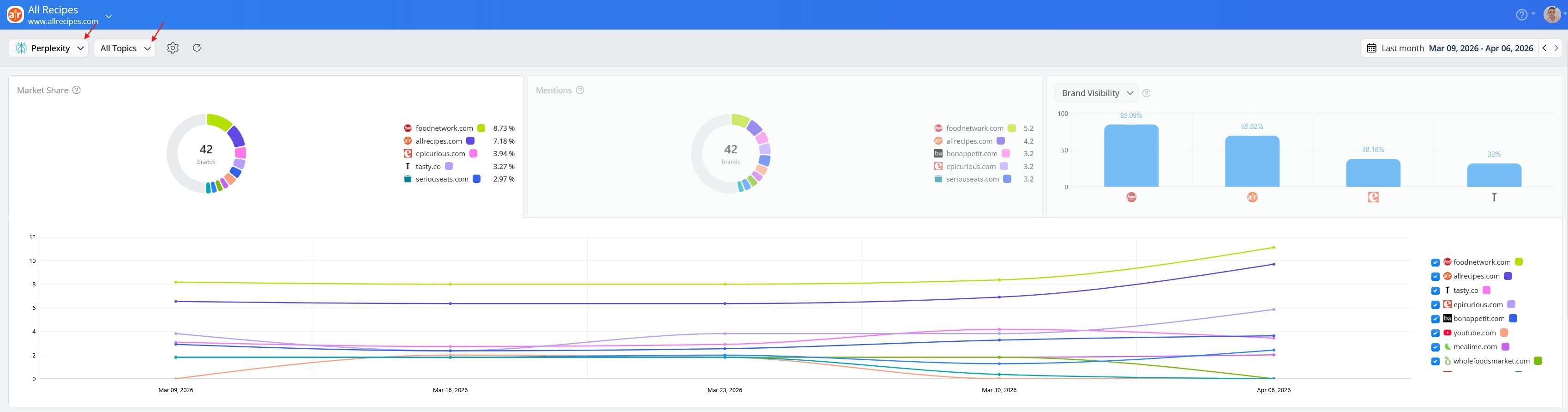

While mentions, framing, and citations form the foundation of AI visibility, Advanced Web Ranking goes further by tracking factors such as market share, brand visibility, topic coverage, and citation rank, offering a more complete picture of how your brand appears across AI-generated answers.

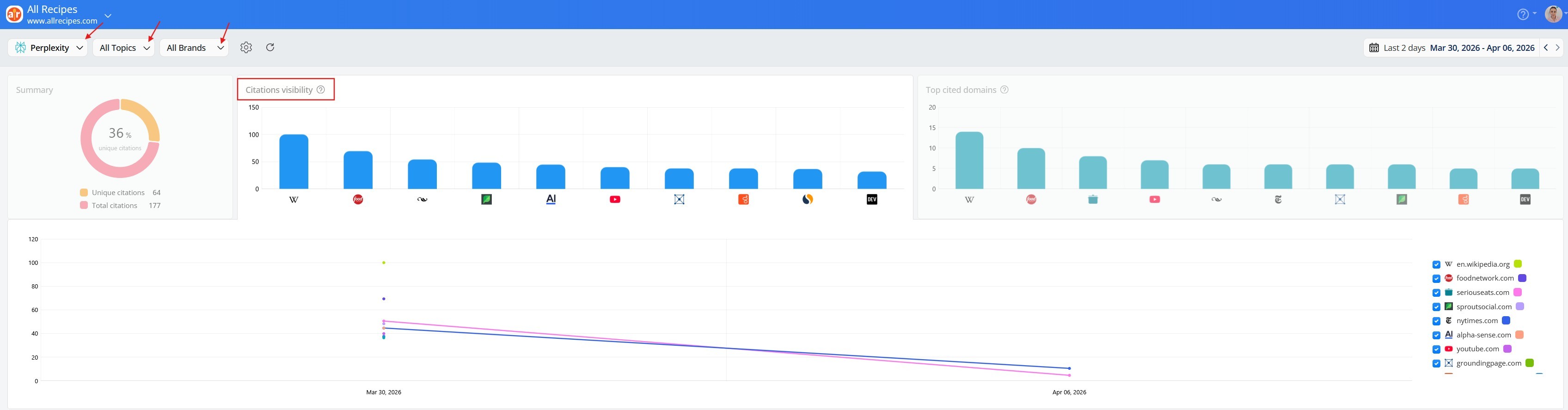

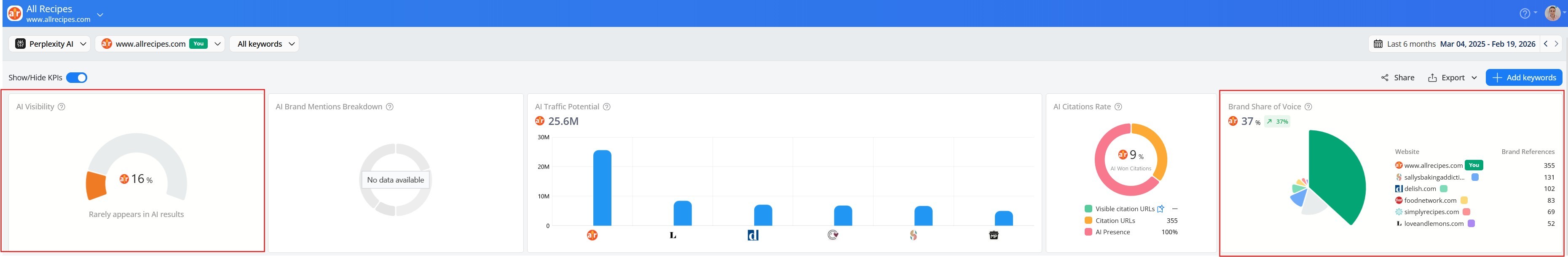

1. Monitor your brand's AI presence

The AI Brand Visibility → Brands report is your starting point for understanding how your brand performs across the selected LLMs and topics. It surfaces three KPIs, each showing all brands present in the results, including yours, even if it doesn't appear in the top 10.

Market Share → your share of AI visibility vs. competitors

shows your rank-weighted share of total AI visibility: brands that appear more often and rank higher earn a larger share.

it’s a relative metric, and it helps you understand how your visibility compares to competitors.

Mentions → how often your brand appears in AI-generated responses

counts the total number of times your brand is mentioned in AI-generated answers (more mentions = greater presence).

helps you compare how frequently your brand appears versus competitors.

Brand Visibility → how prominently your brand stands out

reflects how high your brand appears in AI-generated answers (higher positions = higher visibility).

It’s an absolute metric based on your own rankings, allowing direct comparison with competitors.
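The idea of a rank-weighted share can be sketched in a few lines of Python. The weighting used here (1 / rank) is purely an assumption chosen for illustration; the article does not state the formula AWR applies, and the brand names are made up.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def market_share(appearances: Iterable[Tuple[str, int]]) -> Dict[str, float]:
    """Rank-weighted share of visibility per brand, as a percentage.

    appearances: (brand, rank) pairs, one per AI answer in which the
    brand appeared. Weight = 1 / rank is an assumed placeholder.
    """
    weights: Dict[str, float] = defaultdict(float)
    for brand, rank in appearances:
        weights[brand] += 1.0 / rank
    total = sum(weights.values())
    if total == 0:
        return {}
    return {brand: 100.0 * w / total for brand, w in weights.items()}


# Hypothetical data: Acme appears twice (ranks 1 and 2), Globex once (rank 2).
appearances = [("Acme", 1), ("Acme", 2), ("Globex", 2)]

print(market_share(appearances))  # {'Acme': 75.0, 'Globex': 25.0}
```

Because the shares always sum to 100, a brand's market share can fall even when its own mentions hold steady, simply because a competitor gained ground. That is what makes it a relative metric.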

2. Move to brand-level analysis

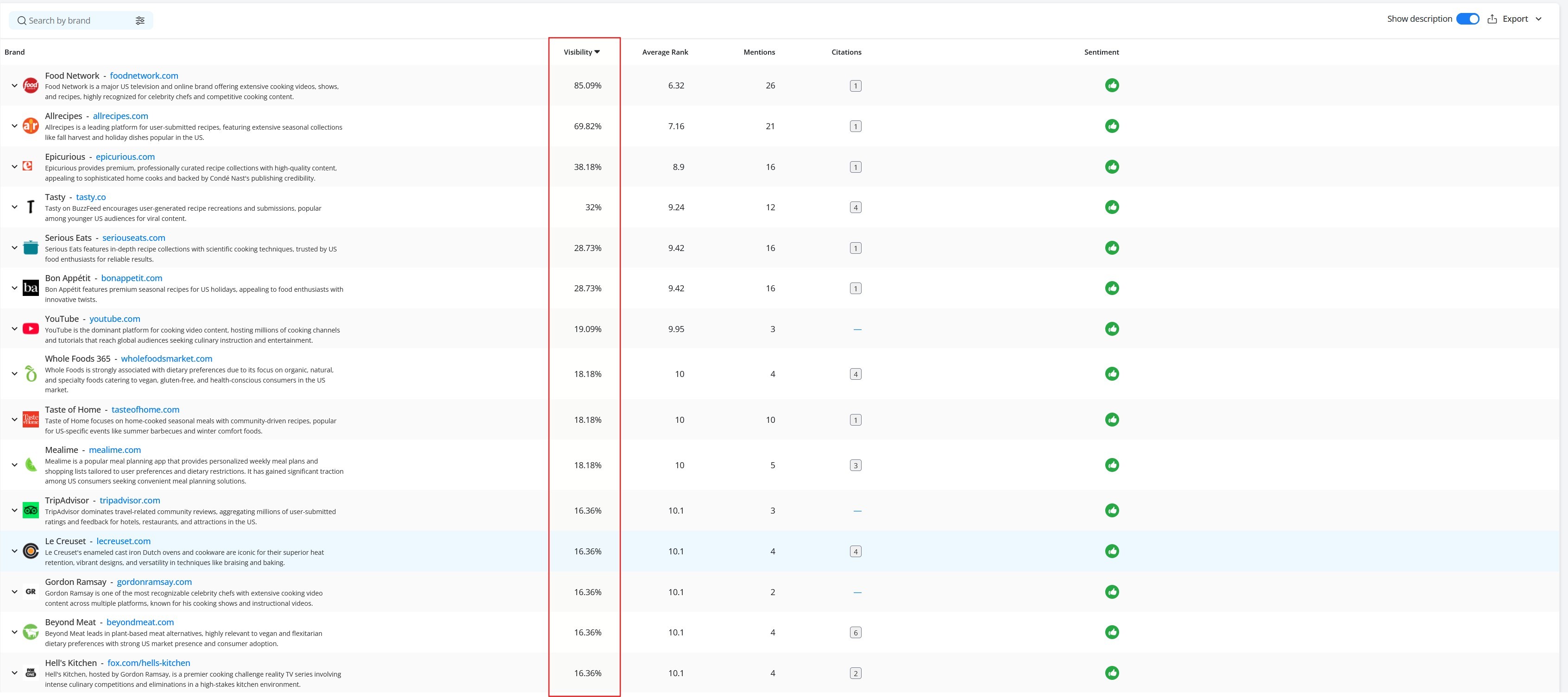

As you scroll down, you’ll find Visibility listed for all brands identified for your selected LLM and topics, showing how prominently each brand appears. Average Rank, Mentions, Citations, and Sentiment provide additional context around that visibility.

Average Rank: shows your brand's typical position across topics. Lower is better, as it means your brand consistently appears near the top of AI-generated responses.

Mentions: shows how often a brand appears

Citations: shows how many unique sources are referenced

Sentiment: shows how your brand is perceived

3. Discover your topic associations

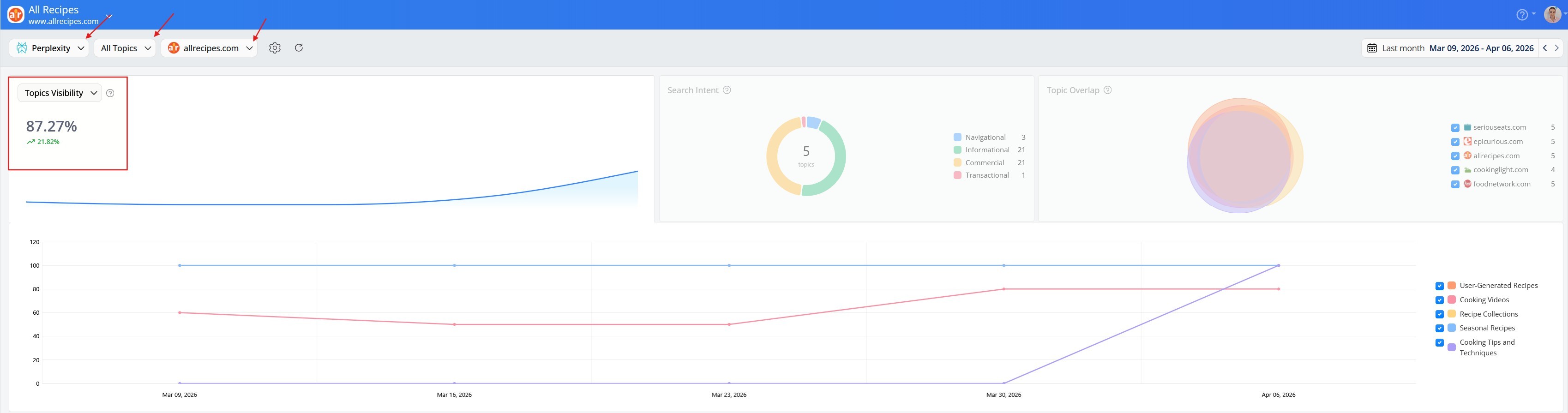

The AI Brand Visibility → Topics report flips the lens from brands to topics, revealing which topics AI platforms connect to your brand and how visible your brand is within them.

Topics Visibility → how prominently your brand appears across all topics

measures your overall visibility score across all retrieved topics

computed for the latest date in the selected range, showing a quick current-state snapshot

For a more granular view, the table breaks down performance topic by topic.

Topic Visibility → how prominently your brand appears within each individual topic

measures the visibility percentage for each individual topic

averaged across all updates in the selected date range — a more granular, time-based view per topic

Average Rank → how consistently your brand leads within each individual topic

shows your brand's typical position for each individual topic, averaged across the selected date range

lower is better. A rank of 1 means you lead that topic, while 6 means you're present but not dominant

For a complete walkthrough of the Brands and Topics reports, check out this article.

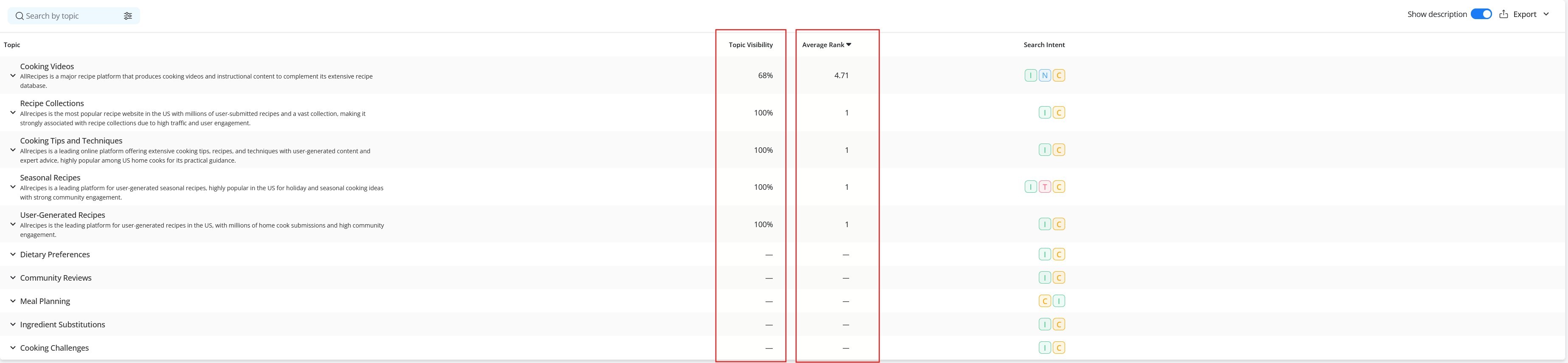

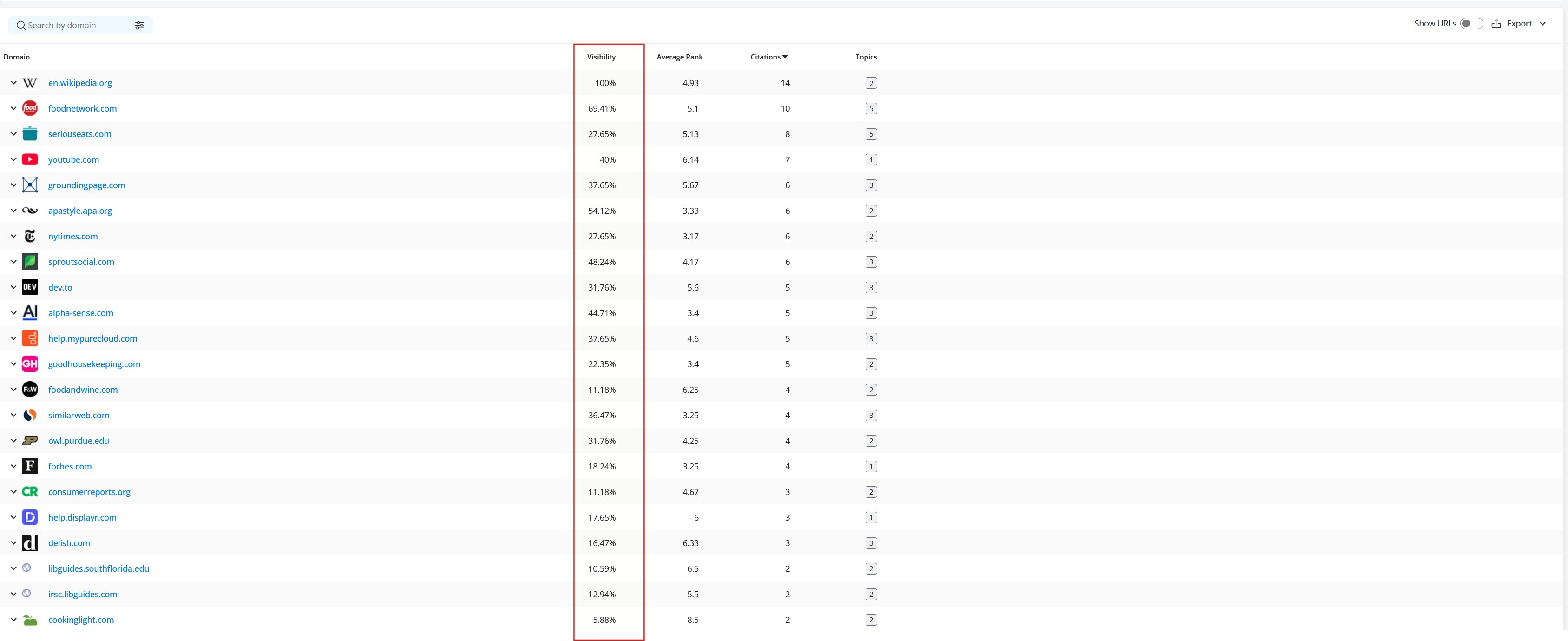

4. Understand which sources AI trusts

The AI Brand Visibility → Citations report shows all domains being used as sources by LLMs when generating answers for your tracked topics. Like the Brands report, you can filter by LLM and topic, but you can also filter by brand.

Citations Visibility → how consistently your content ranks among top cited sources

reflects how frequently your domain appears in the top 10 citations across the selected LLM and topics.

helps identify whether your content is consistently referenced as a top source or only occasionally.

In the table view below, Average Rank and Citations add context to that visibility:

Average Rank: your typical position across all citations, not just the top 10. Lower values indicate better visibility and a stronger presence.

Citations: total number of times your domain was cited.

Together they paint a complete picture:

High Citations Visibility + Low Average Rank = consistently visible and well-positioned

Low Citations Visibility + High Citations = cited often but rarely in the top 10

High Average Rank + Low Citations = rarely cited and poorly positioned
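To see how these three numbers can diverge, here is a small sketch that derives them from ordered citation lists. It assumes Citations Visibility is the share of answers in which the domain appears within the top 10 citations; the function name, the input shape, and the sample domains are hypothetical, and AWR's actual calculation may differ.

```python
from typing import Dict, List, Optional


def citation_metrics(
    domain: str, answers: List[List[str]], top_n: int = 10
) -> Dict[str, Optional[float]]:
    """answers: one ordered list of cited domains per AI answer."""
    ranks: List[int] = []
    top_hits = 0
    for cited in answers:
        positions = [i + 1 for i, d in enumerate(cited) if d == domain]
        ranks.extend(positions)
        # Count the answer once if the best position is inside the top N.
        if positions and positions[0] <= top_n:
            top_hits += 1
    return {
        "citations_visibility": 100.0 * top_hits / len(answers) if answers else 0.0,
        "average_rank": sum(ranks) / len(ranks) if ranks else None,
        "citations": len(ranks),
    }


# Hypothetical citation lists for four AI answers.
answers = [
    ["a.com", "example.com"],
    ["example.com"],
    ["b.com"],
    ["c.com", "d.com", "example.com"],
]

print(citation_metrics("example.com", answers))
# {'citations_visibility': 75.0, 'average_rank': 2.0, 'citations': 3}
```

Average rank is computed over every citation, not just those in the top N, which is why a domain can be cited often yet still score low on Citations Visibility.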

For a deeper dive into the Citations report, check out this article.

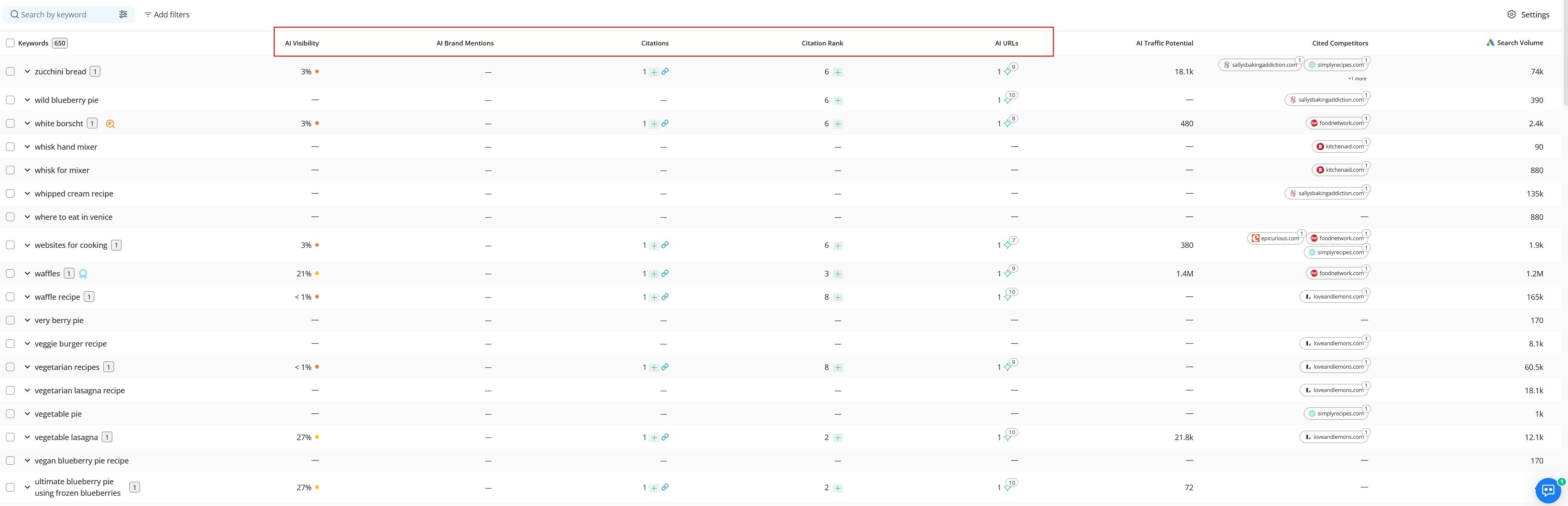

5. Track AI visibility keyword by keyword

The AI Search Tracking → Keyword Performance report goes deeper, showing how your brand performs inside AI-generated results for each specific keyword you track. It measures whether AI models are citing you, mentioning you, and how prominently they're doing so relative to your competitors.

AI Visibility % → your overall AI footprint

shows the percentage of total AI visibility your brand captures across all keywords, combining mentions and citation rankings into a single score

use performance levels to benchmark: Excellent (>60%), Good (35–60%), Fair (20–35%), Poor (<20%)

Brand Share of Voice → your competitive AI visibility

shows your share of total AI mentions across all tracked competitors, including mentions and citation URLs

indicates how your visibility stacks up against competitors targeting the same keywords
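The performance levels and the share-of-voice idea above translate directly into code. In this sketch, the band thresholds come from the list above; because the published bands share their edges (35% and 20% each appear in two bands), the code assumes a shared edge belongs to the higher band. The function names and mention counts are hypothetical.

```python
from typing import Dict


def performance_level(ai_visibility_percent: float) -> str:
    """Map an AI Visibility % to its benchmark band.

    Assumption: values exactly on a shared edge (35, 20) fall into
    the higher band; exactly 60 is "Good" because Excellent is > 60.
    """
    if ai_visibility_percent > 60:
        return "Excellent"
    if ai_visibility_percent >= 35:
        return "Good"
    if ai_visibility_percent >= 20:
        return "Fair"
    return "Poor"


def share_of_voice(mentions_by_brand: Dict[str, int]) -> Dict[str, float]:
    """Each brand's percentage of all mentions across tracked competitors."""
    total = sum(mentions_by_brand.values())
    if total == 0:
        return {brand: 0.0 for brand in mentions_by_brand}
    return {b: 100.0 * n / total for b, n in mentions_by_brand.items()}


print(performance_level(72.5))  # Excellent
print(performance_level(35))    # Good
print(performance_level(12))    # Poor

# Hypothetical mention counts for three tracked brands.
print(share_of_voice({"Acme": 30, "Globex": 50, "Initech": 20}))
# {'Acme': 30.0, 'Globex': 50.0, 'Initech': 20.0}
```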

In the table below, AI Visibility breaks down per keyword, along with other important brand visibility metrics:

Citations → how often your content is used as a cited source

counts how often your website is listed as a cited source per keyword, including the specific URLs cited, since a single keyword may reference multiple pages from your site

higher counts indicate greater trust and usage by AI

AI Brand Mentions → how often your brand is referenced in AI answers

counts brand mentions in AI-generated responses (text or links)

higher counts show stronger presence in AI conversations

The table also includes supporting metrics that add context to your AI visibility:

Citation Rank: shows your position within the citation group

AI URLs: shows the total count of all ranking URLs in the AI result, including duplicates, unlike AI Brand Mentions and Citations, which count each reference only once.

Wrapping Up

SEO visibility and AI visibility are two different measures of the same fundamental goal: being found and chosen by your audience.

Traditional search rankings still matter, and strong SEO remains the foundation of any visibility strategy. But AI-generated answers are increasingly where discovery happens, and brands that track only one surface are missing half the picture.

The good news: you don't need two separate strategies. You need one strategy that's built to perform on both surfaces and the right tools to measure how well it's working.

Article by

Irina Diaconu

I’m a passionate content writer who loves researching and exploring new topics. With a keen eye for detail, I am dedicated to creating well-informed pieces that captivate and inform readers. Sharing knowledge and arousing curiosity is at the heart of my writing journey.