AI Brand Mention Volatility Study

This post first appeared on the FCDC Blog (Freelance Coalition for Developing Countries), and we’re excited to share it here on the AWR Blog with permission. The article was created for the FCDC × AWR Tool Jam competition and was edited by Chima Mmeje and Michelle Tansey.

Earning brand mentions in AI-generated responses is the new hot thing, but sustaining visibility is increasingly complex as volatility remains high.

This inconsistency makes it more challenging for marketers and SEOs to establish trust and attract qualified traffic from LLMs.

I tracked 481 websites across ChatGPT, Perplexity, and Google’s AI Overviews to understand brand mention volatility.

What I found was eye-opening. This article breaks down which types of brands were most volatile, how AI visibility compares to SERP rankings, and the practical steps you can take to build more stability in this new search layer.

Top takeaways

AI brand mentions are more volatile than SERP rankings. Only 49% of brands stayed visible across three weeks, while the rest either dropped out entirely or fluctuated week to week.

There’s an overlap between SERPs and AI mentions. 58% of brands on Google’s first page also appeared in AI responses.

SERP rankings don’t guarantee AI visibility. In the health niche, 30% of brands ranking on Google page one never surfaced in AI answers.

Product and lifestyle brands are the most volatile. 45% of product brands appeared just once in AI results, and 70% of smaller blogs dropped by week 3.

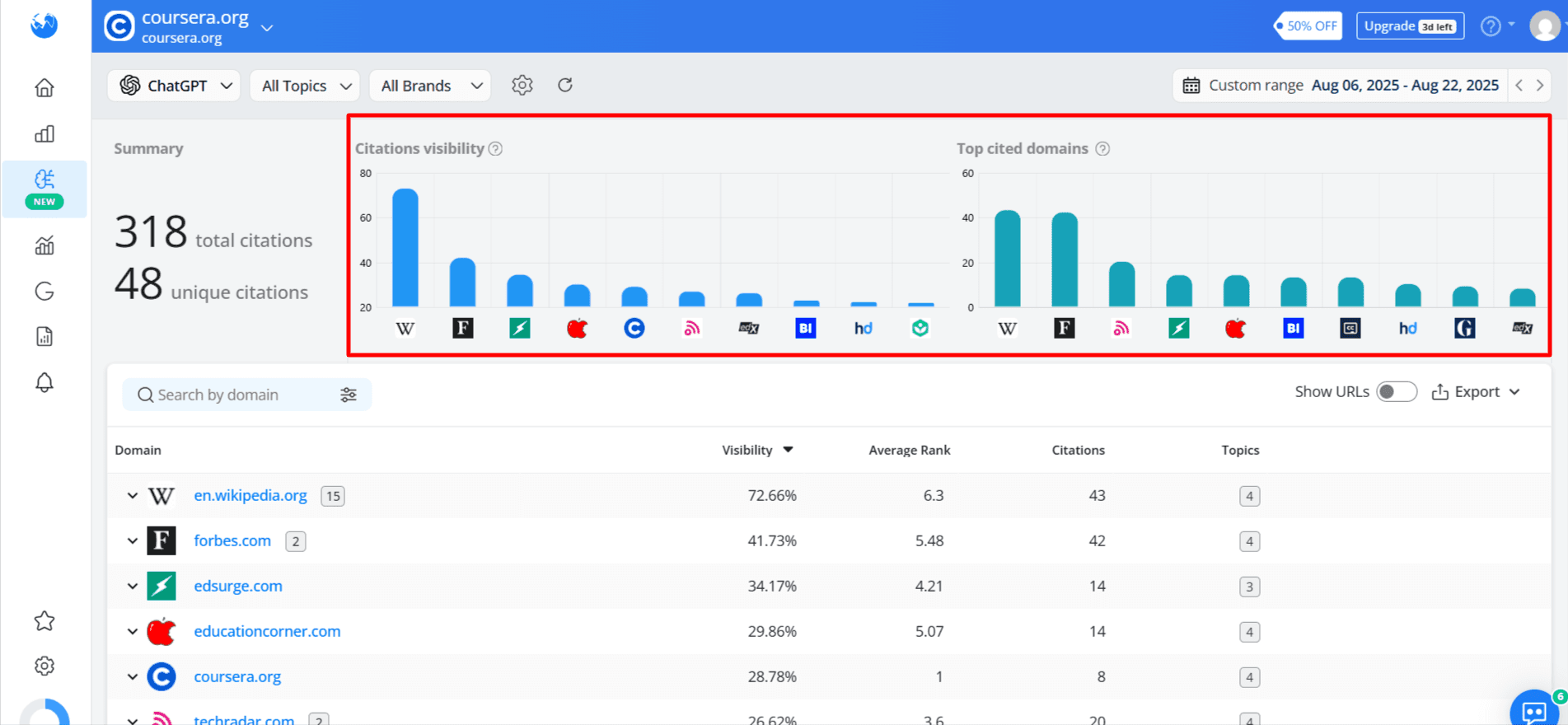

Sites with a mix of blogs, product pages, and authoritative content maintained mentions across weeks. Coursera grew mentions by 88% with blogs plus course listings, while single-format sites like EducationCorner and Consumer Reports lost 43% and 45% respectively.

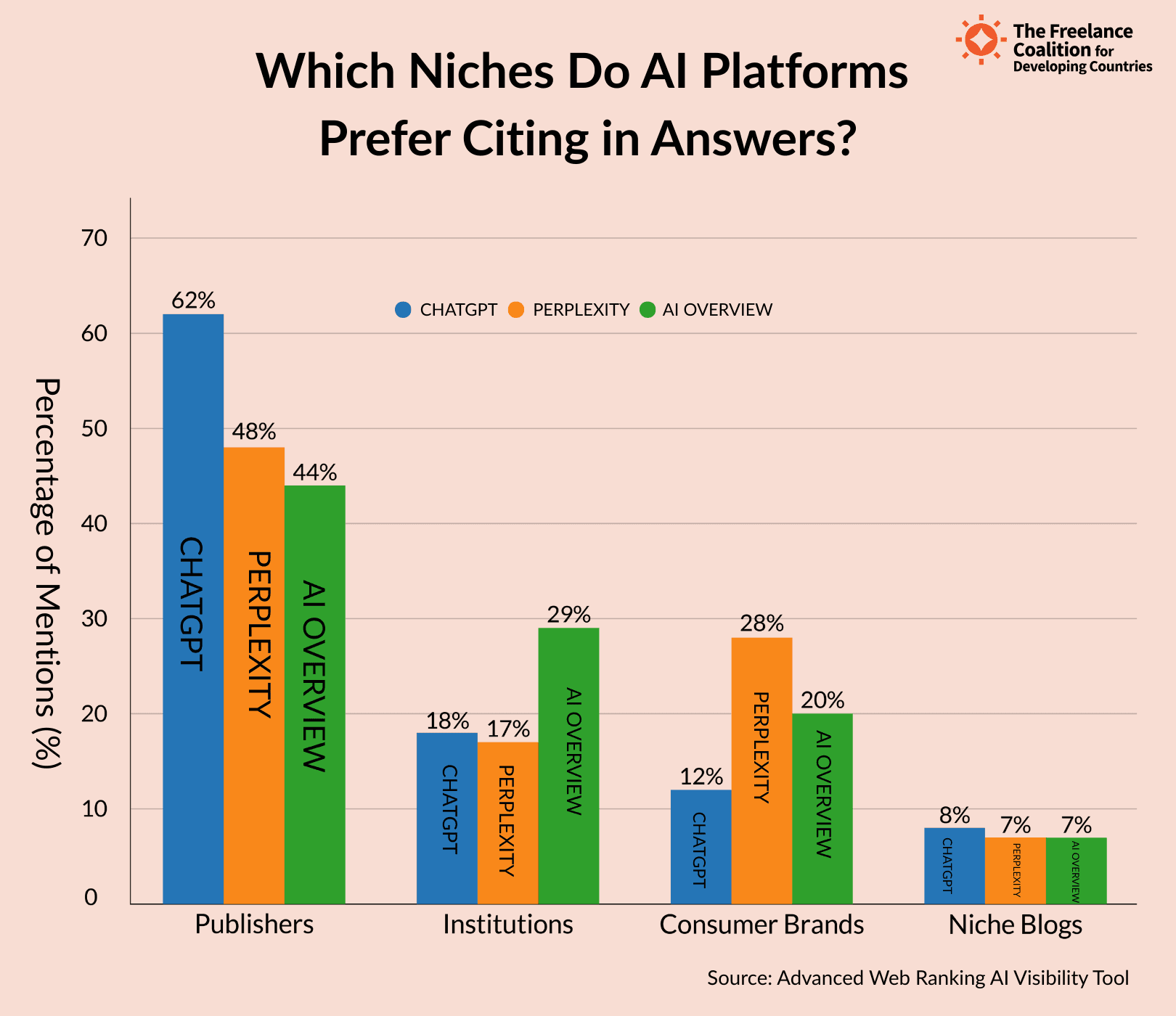

Visibility is fragmented across platforms. ChatGPT gave 62% of mentions to publishers, Perplexity elevated consumer brands (28%), and AI Overviews leaned institutional (29%).

Methodology

I tracked 481 websites across four niches (finance, education, health, and SaaS) over three weeks using the Advanced Web Ranking AI Visibility tool to understand brand mention volatility.

Scope of the study:

Niches analyzed: Finance, education, health, SaaS

Keywords: 20 per niche

Timeframe: 3 weeks

Platforms tracked: ChatGPT, Perplexity, and Google AI Overviews.

Data points: Combined brand mentions (unlinked) and citations (linked)

AWR’s AI Visibility tool collected weekly data on brand presence across all three LLMs. I then compared that data against Google SERP rankings (Top 20, US Desktop), with page one defined as positions 1–10.

Disclaimer: This study does not claim to be comprehensive across all queries or AI platforms. Interpret it as indicative research rather than an absolute measurement.

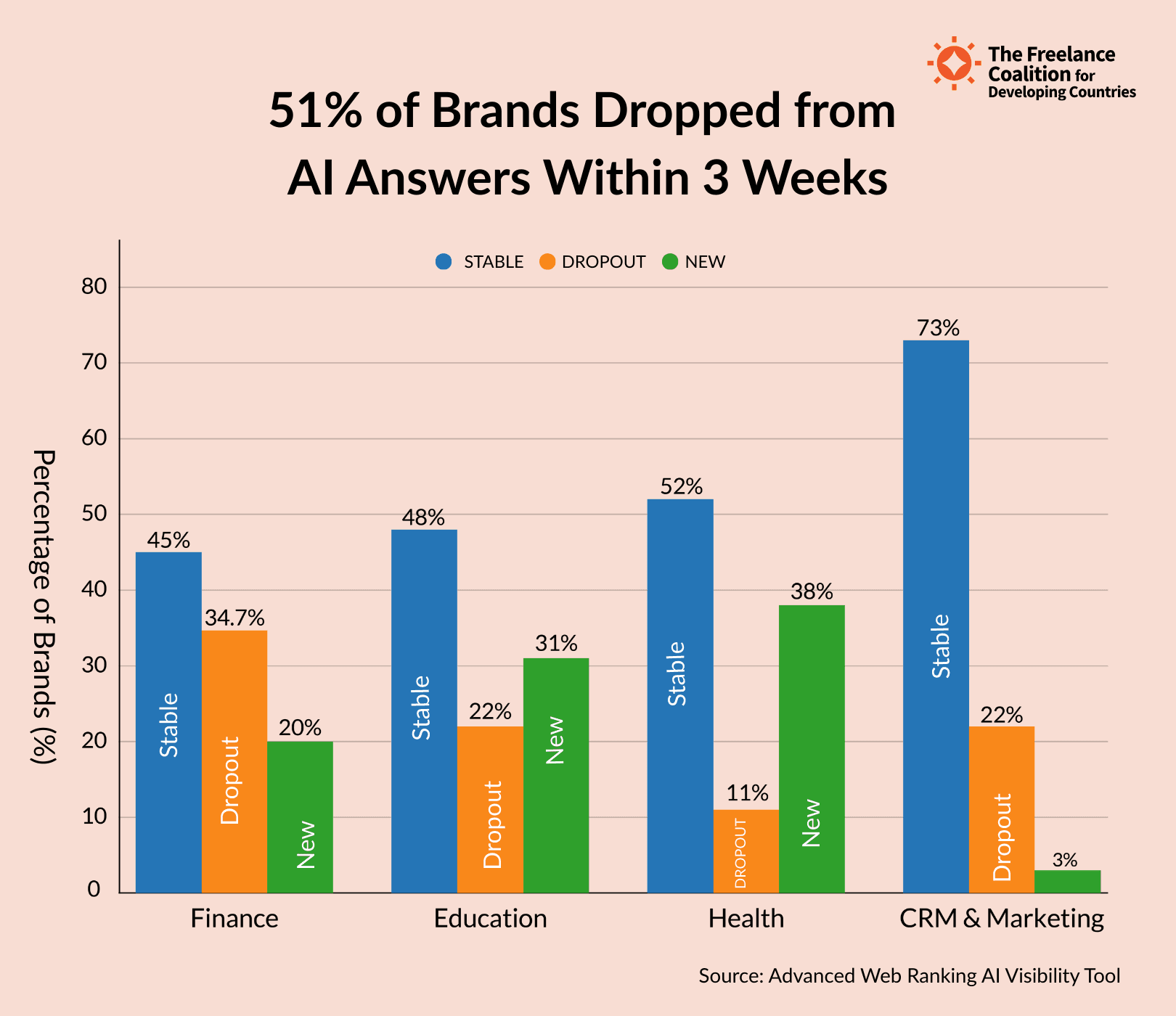

51% of brands dropped from AI answers in three weeks

Brand visibility in AI-generated answers changes fast, and not in subtle ways. Only 49% of brands remained consistently visible across all platforms over three weeks. The rest either dropped out entirely or appeared sporadically.
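One way to make these groups concrete is to label each brand by the weeks in which it appeared. The sketch below is only an illustration, not the study's actual pipeline: the `classify_brand` function, its labels, and the sample domains are hypothetical, and it assumes a brand counts as "dropped" when it is visible from week 1 in an unbroken run and then absent for every remaining week.

```python
from collections import Counter


def classify_brand(weekly_presence):
    """Label a brand from a list of booleans, one per tracked week."""
    if not any(weekly_presence):
        return "never seen"
    if all(weekly_presence):
        return "consistent"
    last_seen = max(i for i, seen in enumerate(weekly_presence) if seen)
    # Dropped: visible in an unbroken run from week 1, then absent afterwards.
    if all(weekly_presence[: last_seen + 1]):
        return "dropped"
    # Anything else came and went, so treat it as sporadic.
    return "sporadic"


# Hypothetical presence data: True means the brand was mentioned that week.
presence = {
    "brand-a.example": [True, True, True],
    "brand-b.example": [True, False, False],
    "brand-c.example": [True, False, True],
    "brand-d.example": [True, True, True],
}

labels = Counter(classify_brand(weeks) for weeks in presence.values())
consistent_share = labels["consistent"] / len(presence)
print(dict(labels), f"consistent: {consistent_share:.0%}")
```

Under this definition, a brand that reappears after a gap is sporadic rather than dropped, which keeps the two non-stable groups separate.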

Finance brands saw the sharpest volatility, with a 34.7% dropout rate, while health was the most stable, losing 10.7%. This gap suggests that some niches are more vulnerable, depending on how LLMs prioritize trust, authority, and content relevance.

Smaller blogs, lifestyle publishers, and product-focused sites were the most unstable brands. These sites either appeared once or briefly gained traction before disappearing altogether.

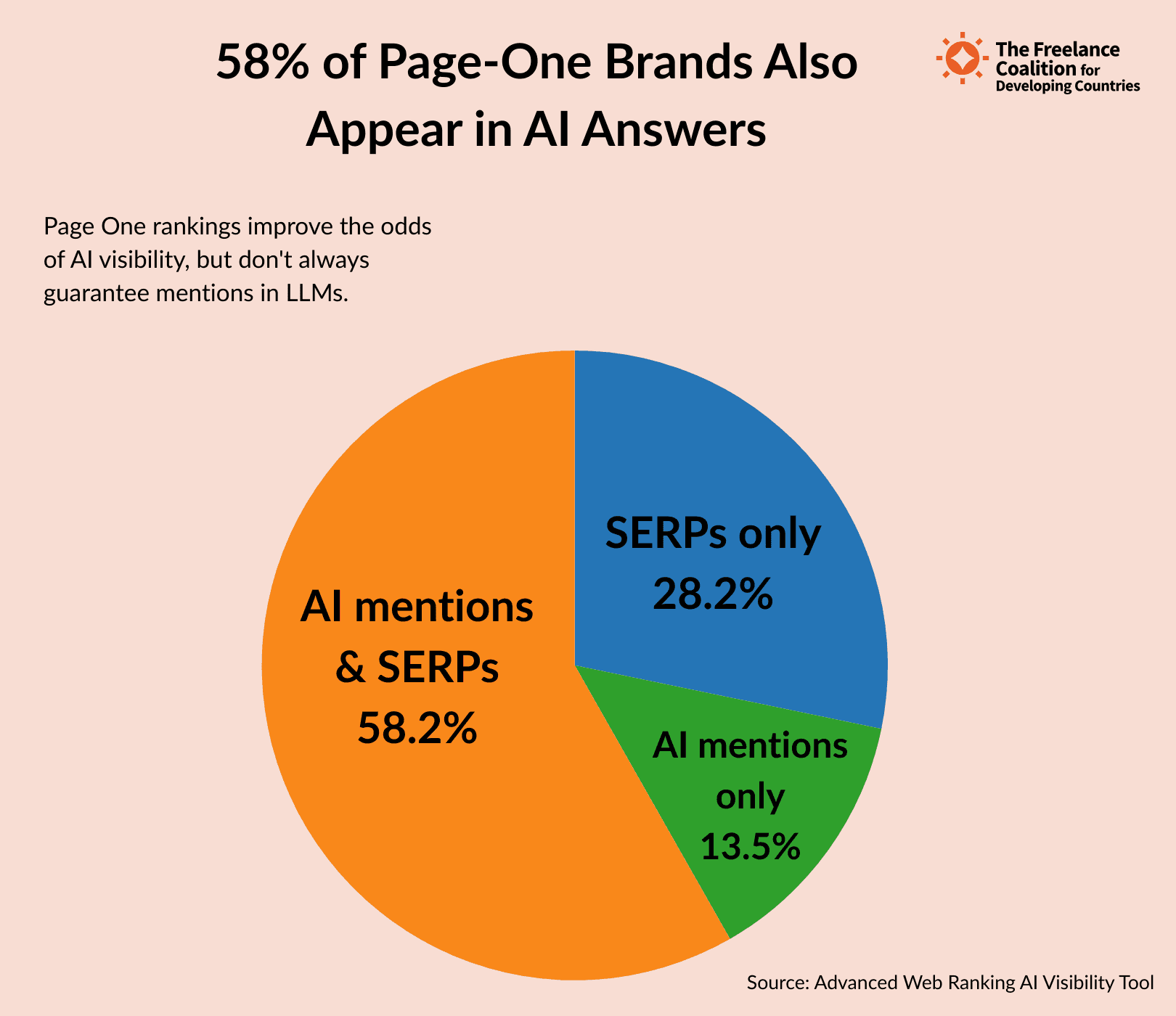

58% of page-one brands also appear in AI answers

Traditional rankings still carry weight in AI-generated results. 58% of brands that ranked on Google’s page one also appeared in AI answers, showing a significant overlap between SERPs and LLM visibility.

But the connection isn’t absolute. 28.2% of page-one brands never surfaced in AI responses, while 13.5% of brands mentioned in AI didn’t rank on page one at all.
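A breakdown like this can be sketched with plain set operations. The example below is a hedged illustration: the `overlap_breakdown` function and the sample domains are hypothetical, and it assumes all three shares use the same denominator (every brand that either ranked on page one or was mentioned by an AI platform), which is a guess at how the figures were derived rather than a documented method.

```python
def overlap_breakdown(page_one, ai_mentioned):
    """Split brands into three groups and return each group's share."""
    page_one, ai_mentioned = set(page_one), set(ai_mentioned)
    universe = page_one | ai_mentioned
    if not universe:
        return {}
    groups = {
        "page one and AI": page_one & ai_mentioned,
        "page one only": page_one - ai_mentioned,
        "AI only": ai_mentioned - page_one,
    }
    return {name: len(members) / len(universe) for name, members in groups.items()}


# Hypothetical inputs: brands on Google's page one vs. brands seen in AI answers.
page_one_brands = {"brand-a.example", "brand-b.example", "brand-c.example"}
ai_brands = {"brand-b.example", "brand-c.example", "brand-d.example"}

shares = overlap_breakdown(page_one_brands, ai_brands)
for name, share in shares.items():
    print(f"{name}: {share:.1%}")
```

Because the three groups are disjoint and cover the whole set, their shares always sum to 100%.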

Movement in SERPs also influenced AI visibility. 61% of brands that lost rankings also disappeared from AI answers, yet some brands gained traction in AI even as their SERP presence declined.

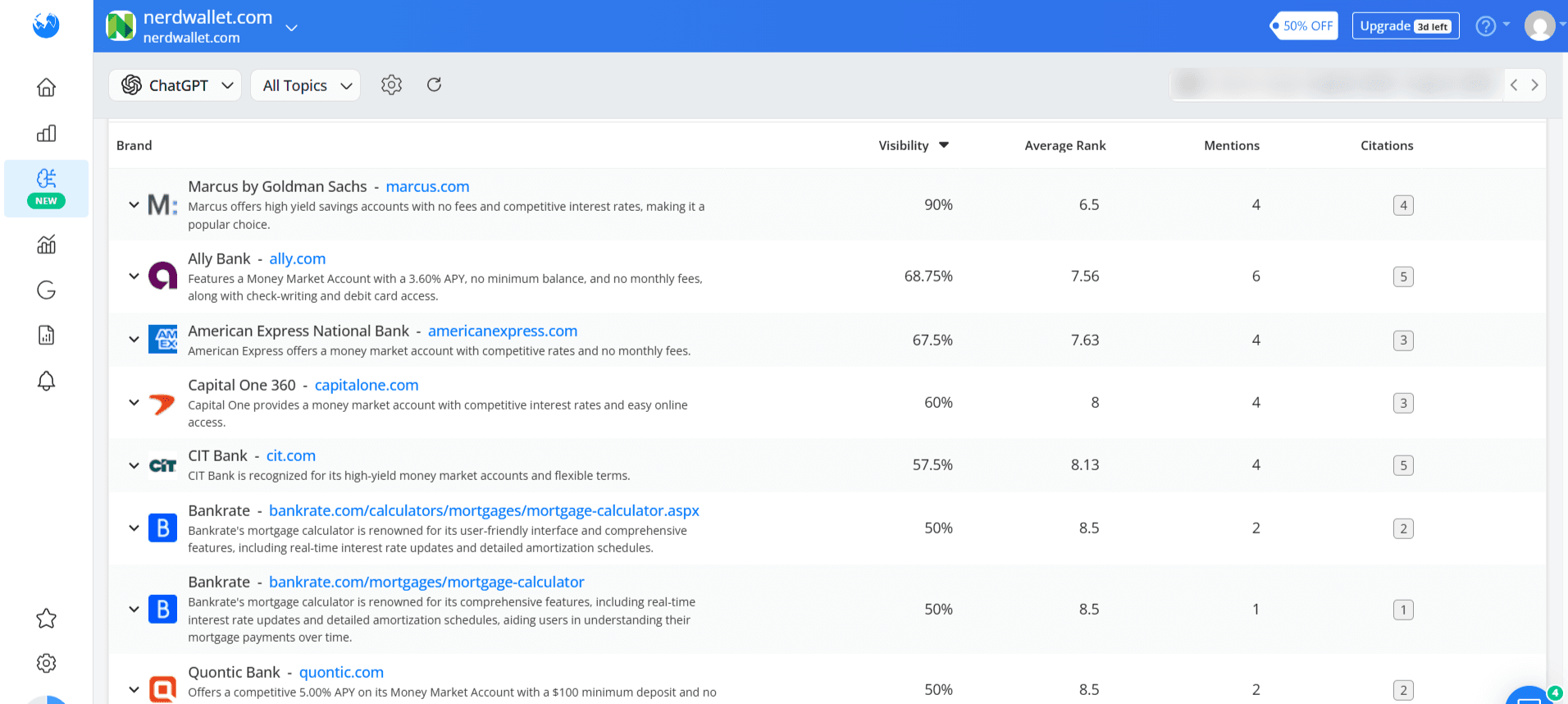

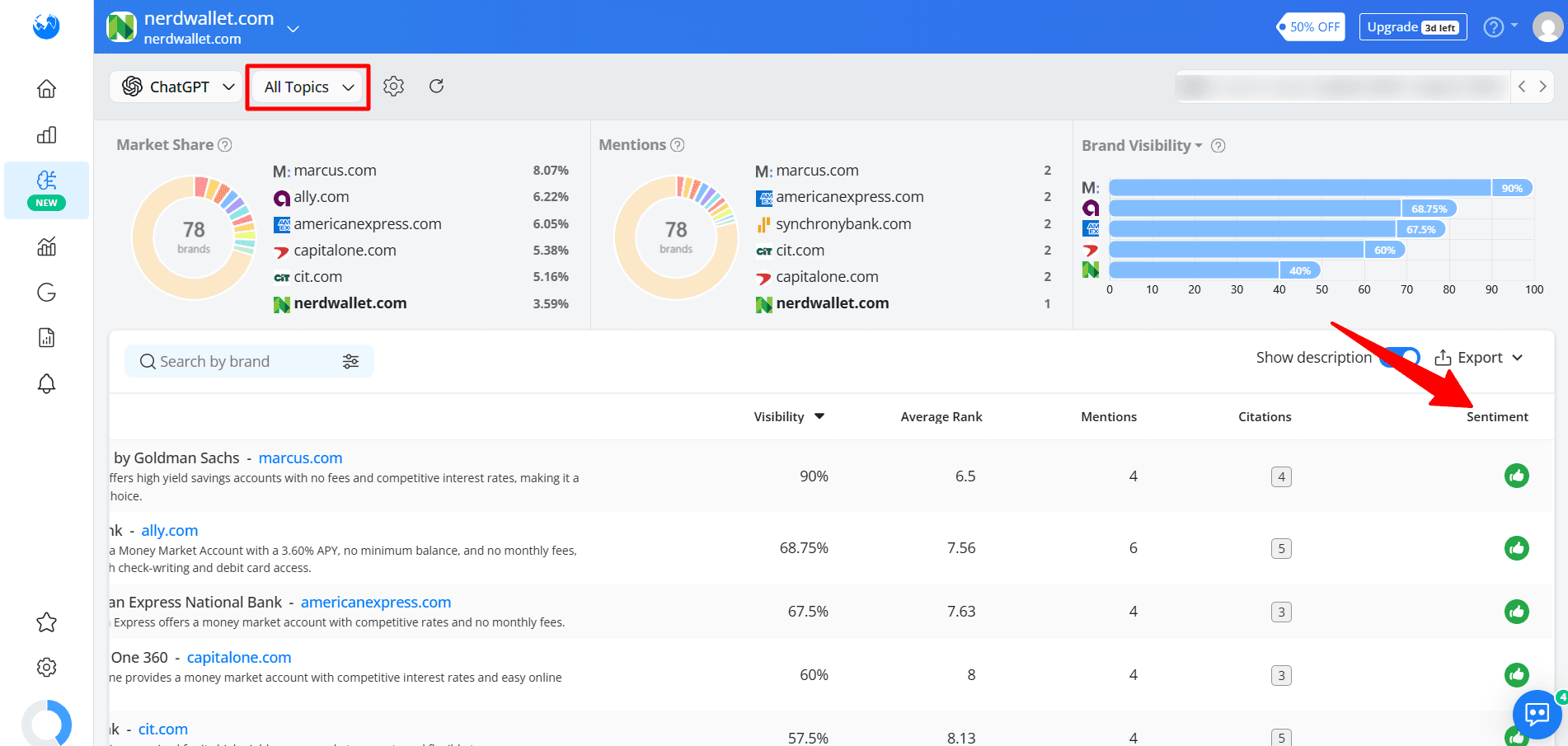

For example, NerdWallet and Healthline maintained strong visibility across SERPs and AI, while Consumer Reports and EducationCorner ranked well in search but lost ground in AI answers. On the other hand, brands like Ally.com and Coursera gained AI visibility even without consistently holding top rankings.

Page one rankings improve your odds of being mentioned in AI responses, but they don’t guarantee it.

Brands with diversified content formats enjoyed more mention stability in AI responses

Brands with multi-format content portfolios, such as blogs, product pages, and course listings, maintained stronger visibility across weeks.

Coursera, for example, paired course listings with blog content and grew its AI mentions by 88%. With both product pages and blogs, HubSpot maintained its position despite high competition.

In contrast, brands that leaned on a single format dropped quickly. EducationCorner, which relied mainly on blogs, saw a 43% decrease in mentions. Consumer Reports lost 45% of its visibility, while 70% of smaller lifestyle blogs and niche publishers had disappeared completely by week 3.
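Growth and loss figures like these are relative changes between two mention counts. Here is a minimal sketch, assuming the change is measured from the first tracked week to the last. The `mention_change` helper is hypothetical, and the counts are made-up round numbers, not the study's raw data.

```python
def mention_change(first_week, last_week):
    """Relative change in mentions between two weeks (0.25 means +25%)."""
    if first_week == 0:
        # A change from zero has no meaningful percentage.
        raise ValueError("first_week must be greater than zero")
    return (last_week - first_week) / first_week


# Hypothetical counts for two brands over the tracking window.
growing = mention_change(first_week=40, last_week=60)
shrinking = mention_change(first_week=40, last_week=30)
print(f"{growing:+.0%}", f"{shrinking:+.0%}")
```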

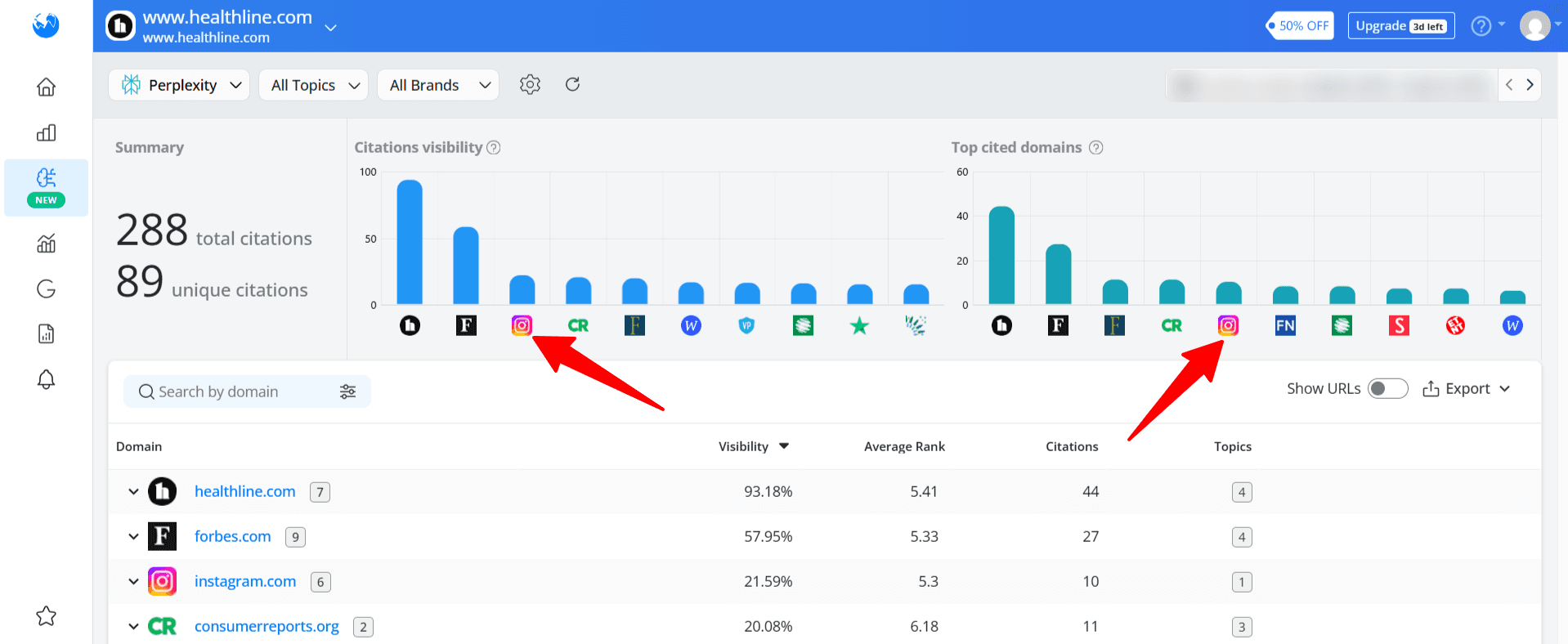

Note: LLMs like Perplexity now cite social media platforms like Instagram, signaling a shift to Search Everywhere Optimization where brand presence across social and non-traditional platforms influences AI-generated results.

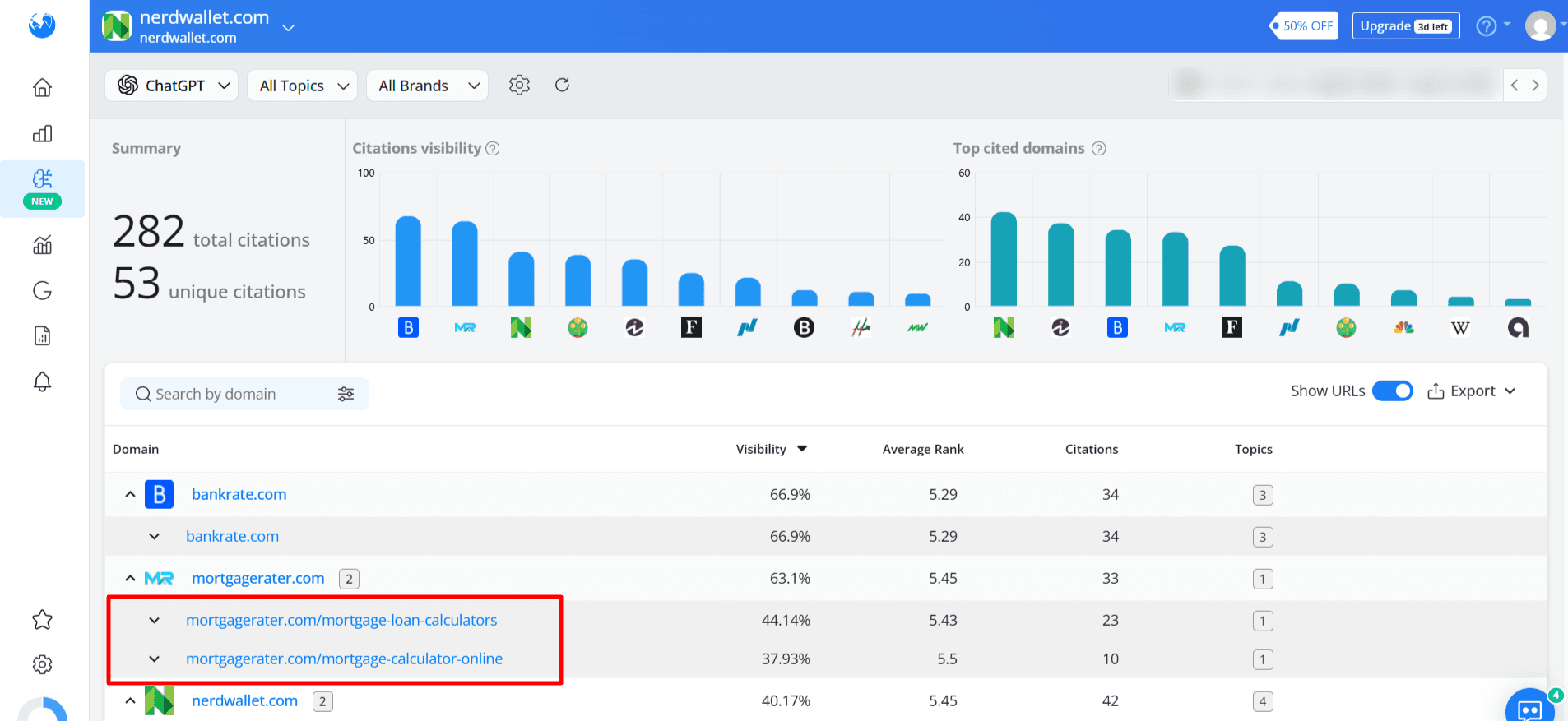

AI also favored deep problem-solving pages (e.g., calculators, templates, interactive tools, configurable checklists).

Remember that AI rewards content variety and multi-channel presence. Institutions and brands with balanced portfolios are less vulnerable to volatility.

Visibility in one AI platform doesn’t guarantee visibility in others

Each AI platform showed clear preferences in the brands and content it surfaced. ChatGPT favored publishers, with 62% of its mentions going to established media and review sites.

Perplexity gave more visibility to consumer and product brands (28%), particularly in health and lifestyle niches. Meanwhile, Google’s AI Overviews was more balanced, with a heavier tilt toward institutions (29%), such as the Mayo Clinic and banks.
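Per-platform percentages like these come from counting mentions by brand category within each platform. The sketch below shows one way to do that. The `category_share` function, the category labels, and the sample rows are hypothetical, and it assumes each mention has already been tagged with a platform and a brand category.

```python
from collections import Counter, defaultdict


def category_share(mentions):
    """Return each category's share of mentions, per platform.

    mentions: iterable of (platform, category) pairs, one pair per mention.
    """
    counts = defaultdict(Counter)
    for platform, category in mentions:
        counts[platform][category] += 1
    return {
        platform: {
            category: n / sum(by_category.values())
            for category, n in by_category.items()
        }
        for platform, by_category in counts.items()
    }


# Hypothetical tagged mentions, not data from the study.
sample_mentions = [
    ("ChatGPT", "publisher"),
    ("ChatGPT", "publisher"),
    ("ChatGPT", "institution"),
    ("Perplexity", "consumer brand"),
    ("Perplexity", "publisher"),
]

platform_shares = category_share(sample_mentions)
for platform, by_category in platform_shares.items():
    print(platform, {c: f"{s:.0%}" for c, s in by_category.items()})
```

Computing shares inside each platform, rather than across the whole dataset, is what makes the platforms comparable even when one produces far more mentions than another.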

Cross-platform stability was inconsistent. AI Overviews favored institutions like Mayo Clinic, while publishers in ChatGPT, including Bankrate and Investopedia, saw double-digit declines.

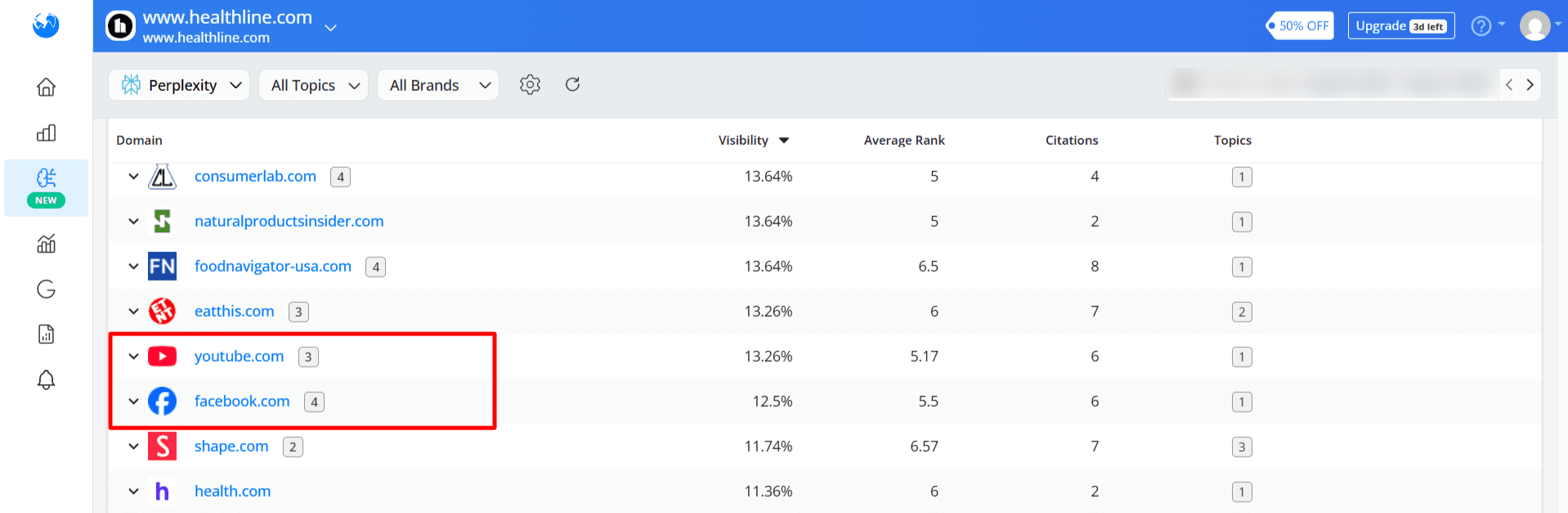

Perplexity was the most experimental, surfacing supplement brands and smaller banks with little to no SERP presence.

AI visibility depends heavily on platform bias. ChatGPT rewards established publishers, Perplexity experiments with emerging product brands, and AI Overviews leans institutional. For brands, visibility in one system doesn’t guarantee presence in another.

Most stable: Institutions in AI Overviews (Coursera, edX, and Mayo Clinic stayed anchored across weeks).

Most volatile: Publishers in ChatGPT (Bankrate and Investopedia saw double-digit declines in mentions).

Most experimental: Supplement brands and banks (Vital Proteins and Discover Bank gained AI mentions despite weak or absent SERP presence).

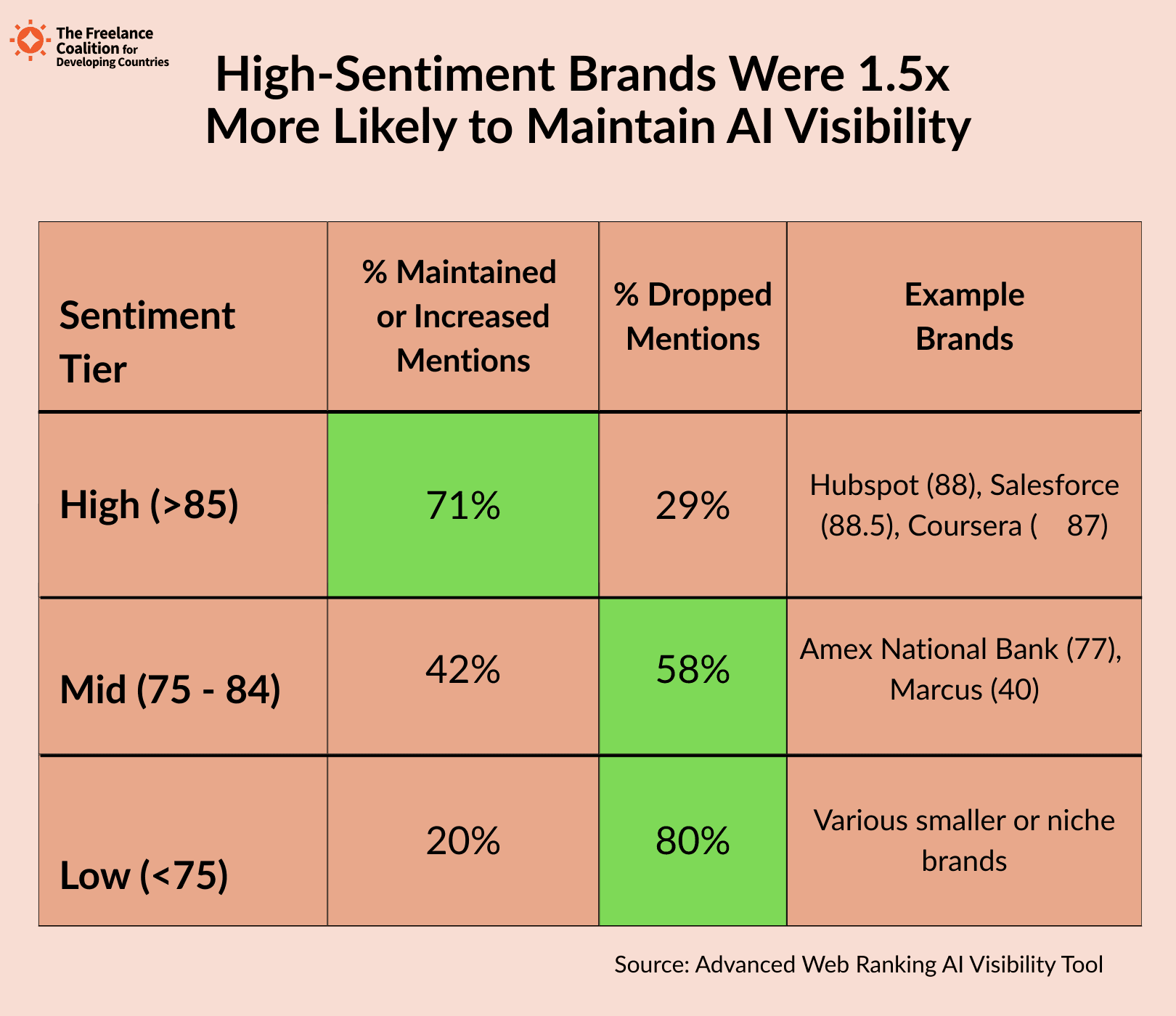

Positive brand sentiment leads to more stable AI visibility

Brands with a high sentiment score (85 or above) were more stable; 71% either maintained or increased their mentions over the three weeks.

In contrast, brands in the mid-sentiment range (75–82) showed greater volatility, often fluctuating or disappearing entirely. These brands weren’t necessarily less authoritative, but the data suggests that neutral or mixed sentiment may make them easier to replace in LLM-generated content.
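To show how a stability rate per sentiment band could be calculated, here is a rough sketch. The band boundaries follow the ranges quoted above, but everything else is an assumption: the `sentiment_band` and `held_or_grew_rate` helpers, the field names, and the sample records are hypothetical, and "maintained or increased" is interpreted as week 3 mentions being at least as high as week 1.

```python
def sentiment_band(score):
    """Map a sentiment score to the bands used in this section."""
    if score >= 85:
        return "high"
    if 75 <= score <= 82:
        return "mid"
    return "other"


def held_or_grew_rate(brands, band):
    """Share of brands in a band whose week 3 mentions are >= week 1."""
    in_band = [b for b in brands if sentiment_band(b["sentiment"]) == band]
    if not in_band:
        return None
    held = sum(1 for b in in_band if b["week3"] >= b["week1"])
    return held / len(in_band)


# Hypothetical records: sentiment score plus mention counts for two weeks.
brands = [
    {"name": "brand-a.example", "sentiment": 90, "week1": 10, "week3": 12},
    {"name": "brand-b.example", "sentiment": 86, "week1": 8, "week3": 5},
    {"name": "brand-c.example", "sentiment": 78, "week1": 6, "week3": 0},
    {"name": "brand-d.example", "sentiment": 80, "week1": 4, "week3": 4},
]

high_rate = held_or_grew_rate(brands, "high")
mid_rate = held_or_grew_rate(brands, "mid")
print(high_rate, mid_rate)
```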

AI models are trained on large, open datasets, including news articles, product reviews, and social media posts. That means your brand’s tone in public-facing content can significantly impact its visibility.

To improve sentiment, collect customer feedback, address friction points, and align messaging across all touchpoints.

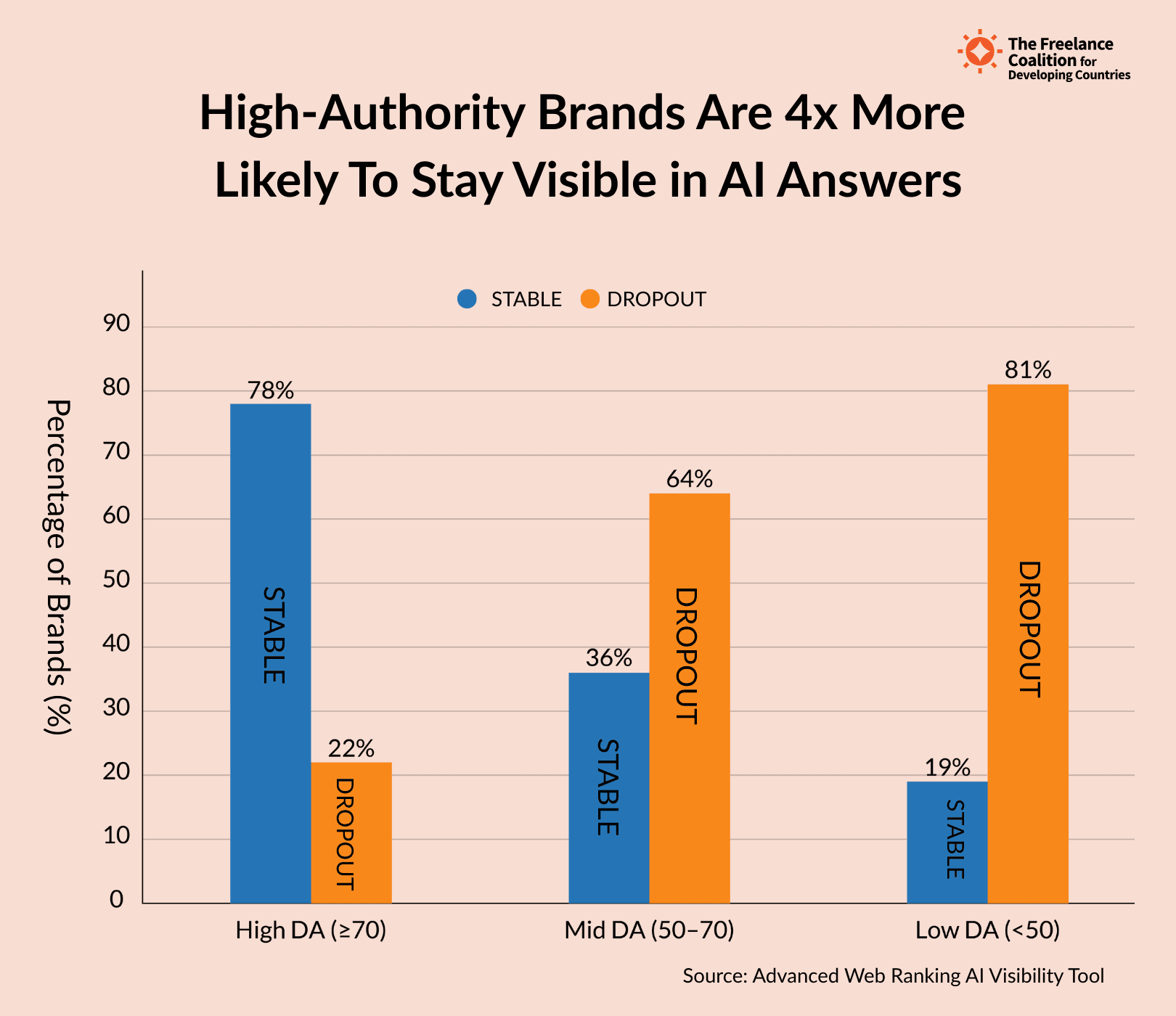

Brands with high Domain Authority enjoy more mention stability in AI answers

Domain Authority helps, but it isn’t a shield against volatility. 78% of high-authority brands (DA 70+) remained stable across weeks, rarely disappearing entirely.

Lower-authority brands had a much harder time. 64% of sites with a DA below 50 dropped at least once, often surfacing briefly before vanishing from AI answers.
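A "dropped at least once" rate per authority band can be sketched as follows. This is an illustrative guess at the calculation, not the study's method: the helper names, the field names, and the sample sites are hypothetical, and a drop is assumed to mean a week with zero mentions after the site had already appeared.

```python
from collections import Counter


def dropped_at_least_once(weekly_mentions):
    """True if a zero-mention week follows a week with mentions."""
    seen = False
    for count in weekly_mentions:
        if count > 0:
            seen = True
        elif seen:
            return True
    return False


def da_band(da):
    """Bands matching the thresholds quoted above (70+ and below 50)."""
    if da >= 70:
        return "high"
    if da < 50:
        return "low"
    return "mid"


def drop_rate_by_band(sites):
    """Share of sites in each DA band that dropped at least once."""
    totals, drops = Counter(), Counter()
    for site in sites:
        band = da_band(site["da"])
        totals[band] += 1
        drops[band] += int(dropped_at_least_once(site["weekly_mentions"]))
    return {band: drops[band] / totals[band] for band in totals}


# Hypothetical sites with a Domain Authority score and weekly mention counts.
sites = [
    {"da": 85, "weekly_mentions": [5, 6, 7]},
    {"da": 72, "weekly_mentions": [3, 0, 2]},
    {"da": 40, "weekly_mentions": [1, 0, 0]},
    {"da": 35, "weekly_mentions": [0, 2, 0]},
    {"da": 45, "weekly_mentions": [2, 2, 3]},
]

rates = drop_rate_by_band(sites)
print(rates)
```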

LLMs value authority and relevance together. A strong link profile improves stability but must be paired with content that satisfies query intent to hold visibility over time.

Key recommendations

Based on the findings of this study, you should take the following steps to improve stability and visibility in AI-generated answers:

Pair authority with query-level relevance

High Domain Authority alone won’t secure AI visibility. Some authoritative sites lost ground when their content didn’t satisfy search intent.

You need thought leadership content that has a pulse: something that doesn’t exist elsewhere yet, whether it’s a strong opinion, data, or customer insights.

Diversify content types

Don’t rely on a single type of content. Consider audio-visual, written, interactive, and social content. Templates and tools also work well.

Brands like HubSpot and Coursera, which combined content formats, performed better in AI-generated responses.

The study also showed that Perplexity now cites Facebook in its responses. LLMs were already citing Instagram and YouTube in their answers, indicating that video and social content play a role in AI visibility.

The more formats you cover, the harder it is to be replaced.

Monitor AI mentions as a KPI

SERP rankings and AI visibility don’t always align. Treat AI brand mentions as a metric in their own right and monitor them alongside search performance.

You can use the Advanced Web Ranking AI Brand Visibility tool to track your brand mentions across all leading LLMs and see which brands they mention alongside yours.

Sign up for the tool and create a new project. Then, enter your keywords and competitors.

Next, choose your preferred LLMs and set the tracking report frequency. AWR automatically adds more SERP competitors for a broader view of your AI visibility.

For this study, the AI Visibility tool refreshed the entire dataset of 481 sites and 20 keywords per niche every week, across all four niches.

It tracked brand mentions and citations from LLMs and mapped them back to the domains being surfaced.

Manage sentiment signals

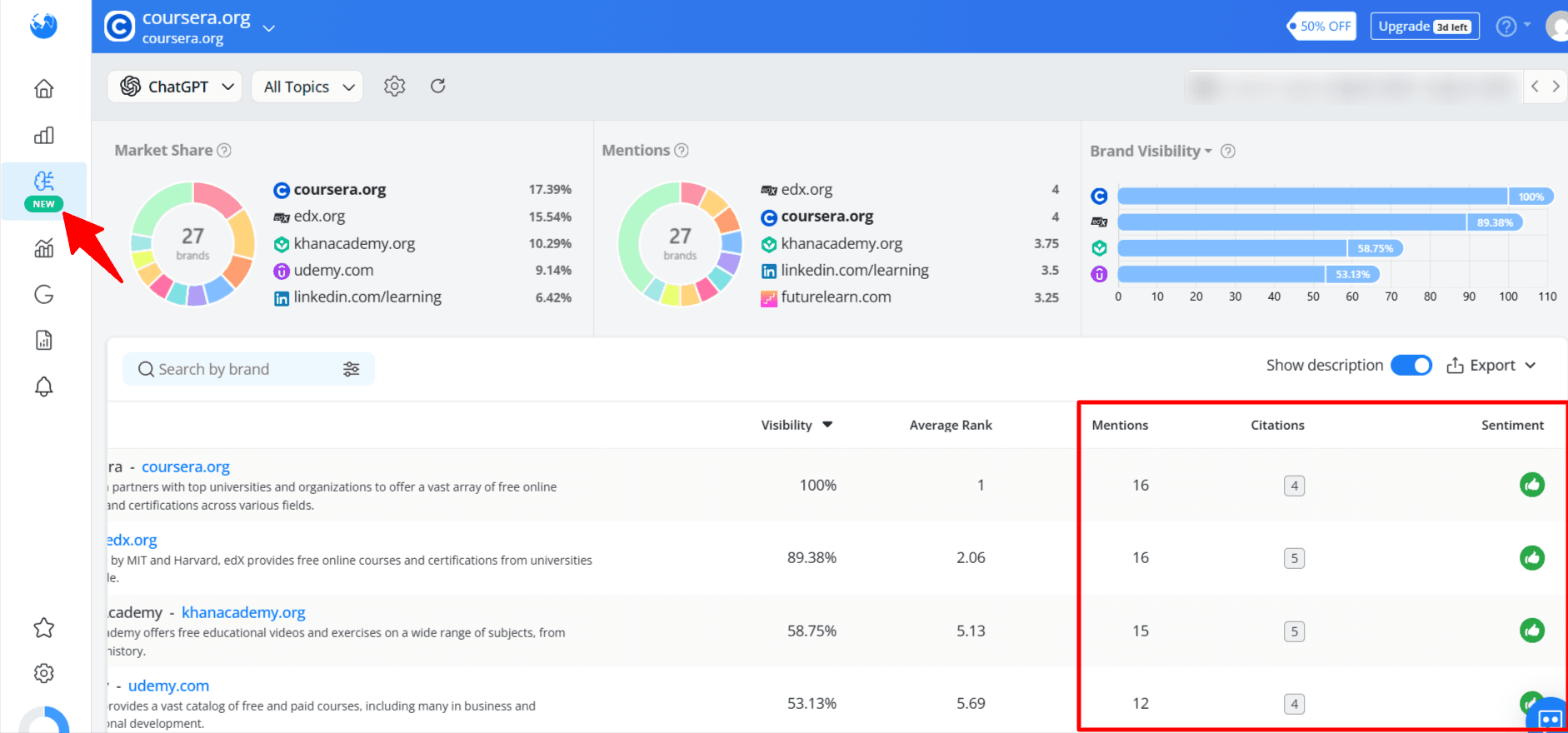

High-sentiment brands (≥85) were more stable in AI mentions. Track your brand’s sentiment to see how people talk about it online, and make changes where needed. You should proactively manage perception in the sources that AI systems crawl.

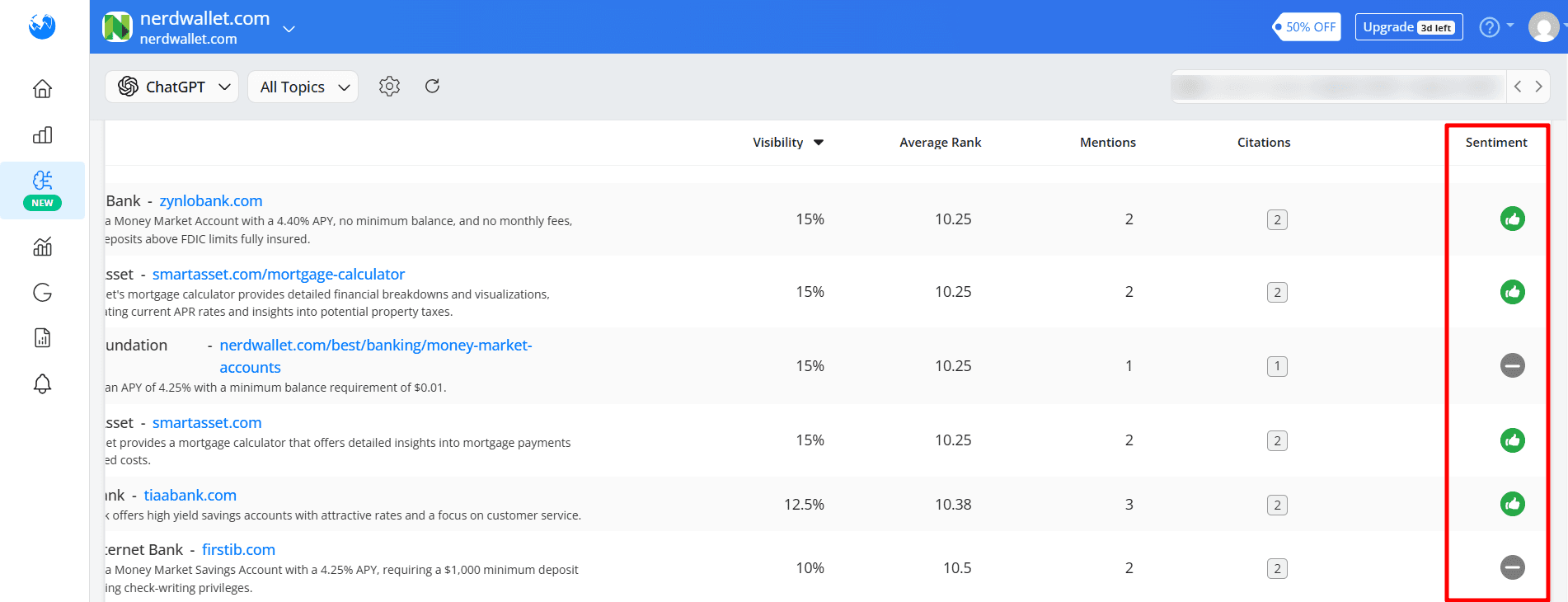

The AWR AI Brand Visibility tool tracks your brand’s sentiment alongside that of your competitors.

Go to the “AI brand visibility → Brands” section and check the right corner of the dashboard. You can also view the cumulative sentiment of all tracked topics or segment it by topic.

A green thumbs-up signifies positive sentiment, grey signifies neutral, and red signifies negative.

Concluding thoughts: Treat AI brand mentions as a core visibility metric

This study revealed that AI brand mentions are highly volatile. It also showed that AI visibility doesn’t mirror SERPs, and that ranking on page one doesn’t guarantee AI mentions.

To stay visible, track AI mentions and build a diversified content engine that strengthens brand authority and perception.

Sign up for Advanced Web Ranking AI Visibility to track your AI brand presence today.

Article by

Success Olagboye

Success Olagboye is a content strategist and writer who helps SaaS brands increase their organic traffic and revenue through customer-focused content. He has created successful content assets for leading European and American SaaS brands.

Success loves to explore nature when he is not working.